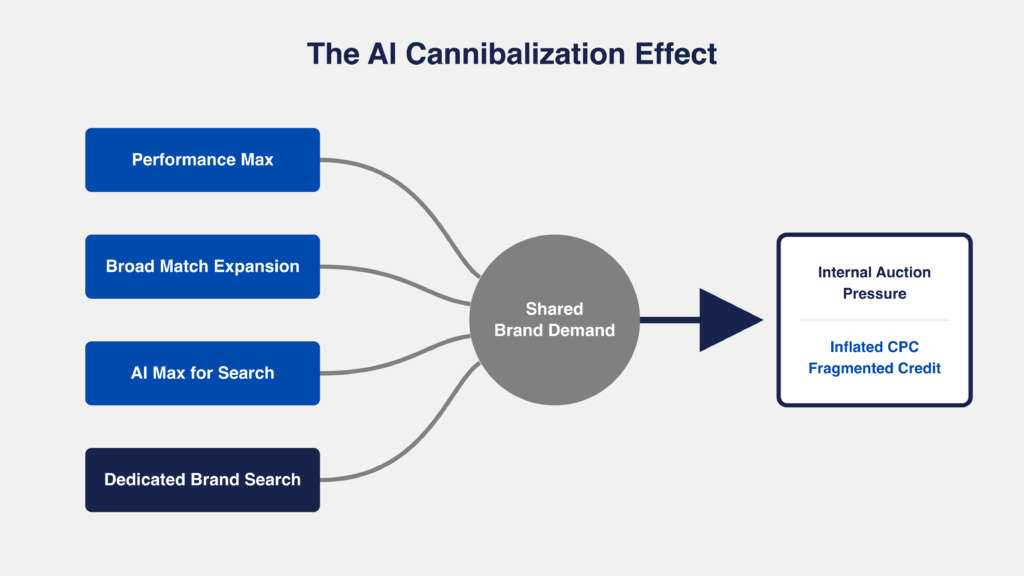

Google’s AI products don’t coordinate, they compete. Performance Max, Broad Match, and AI Max for Search campaigns all chase the same high-converting brand queries, creating internal auction pressure that raises costs per click (CPCs) and distorts performance.

The solution is structural: enforce single-campaign ownership of brand through negatives, exclusions, and match type control so demand is captured once, measured cleanly, and scaled efficiently.

How Three AI Products End Up in the Same Brand Auction

Performance Max (PMax), Broad Match, and AI Max for Search campaigns all independently gravitate toward brand queries because they optimize for conversion probability, not incrementality, and none of them coordinate with each other. The result is multiple campaigns from the same account eligible for the same brand auctions, competing for traffic the advertiser would likely have won with a single campaign.

This is predictable. Every automation product in Google Ads is designed to find conversions as efficiently as possible.

PMax is a heat-seeking missile for conversion signals. It doesn’t distinguish between a net-new customer and someone who already has your URL bookmarked. Broad Match expands into semantically related queries, including branded variants. AI-driven query expansion generates new search paths based on conversion probability, not intent quality.

Brand sits at the center of all three. It converts faster, more reliably, and with clearer signals than any non-brand query set. That makes brand the path of least resistance for every automated system in the account.

Because these systems operate independently, they all converge on the same demand. Each system reaches brand differently and exposes different levels of visibility and control:

| AI Product | Targets Brand by Default? | Query-Level Visibility | Exclusion Method |
| --- | --- | --- | --- |
| Performance Max | Yes, prioritizes high-converting queries | Search terms report (with privacy thresholds) + Search Themes | Account-level brand exclusions (entity-based) + campaign-level negative keywords (up to 10,000) |
| Broad Match | Yes, semantic expansion includes brand | Search terms report (with privacy thresholds) | Negative keywords (shared lists recommended) |
| AI Max for Search | Yes, auto-generated queries from signals | Partial (search terms + auto-created themes) | Campaign-level controls + negatives |

The most visible version of this is what operators experience as the PMax Vacuum. You launch Performance Max to drive incremental growth: Shopping expansion, YouTube reach, net-new demand. But within weeks, brand begins surfacing in PMax → Insights → Search Themes. At the same time, your Brand Search campaign softens: impression share declines, CPCs rise, and conversion volume holds but redistributes.

Google isn’t going to tap you on the shoulder and tell you you’re lighting margin on fire; the system is designed to reward this overlap with ‘green’ dashboards. You have to find the signal in the noise of the data:

- PMax shows strong performance on “generic” themes

- Brand campaign efficiency weakens

- Total account performance stays flat

Don’t blame the algorithm; PMax isn’t ‘broken.’ It’s doing exactly what Google engineered it to do: find the path of least resistance to a conversion. In 90% of accounts, that path is paved with your own brand equity.

When multiple campaigns from the same account are eligible for the same query, Google runs an internal “audition.” Only one ad from your account enters the main auction per ad placement. You’re not literally bidding against yourself in the public marketplace. But the wrong campaign can win that audition: PMax beats your dedicated Brand campaign, claims the click, takes the attribution credit, and serves whatever creative Google assembled instead of your controlled brand ad. The cost isn’t a higher CPC from self-competition. It’s misallocated spend, distorted attribution, and loss of control over what users see when they search your name.

You can see the downstream effects in your brand campaign’s Auction Insights. If impression share drops or overlap rates shift without new external competitors entering, that’s internal reallocation surfacing in data. Your dedicated brand campaign’s Auction Insights is the more reliable diagnostic because PMax Auction Insights are still aggregated across networks. However, PMax channel performance reporting (available since June 2025) now breaks down results by Search, Shopping, Display, YouTube, and other channels, giving you channel-level performance data even when Auction Insights remains aggregated.

This isn’t just a reporting duplication error; it’s a tax on your margins. The wrong campaign wins the internal audition, claims brand credit it didn’t earn, and your actual non-brand growth remains stagnant.

Demand stays constant, but cost and attribution shift. You end up paying more for the same traffic while performance looks stronger than it actually is.

That overlap doesn’t just affect pricing; it also distorts how conversions are measured.

Once multiple campaigns are eligible for the same brand query, attribution distributes credit across them, making campaign-level performance look efficient while account-level efficiency remains flat.

One campaign wins the click, but others can still receive assist or view-through credit depending on attribution settings. That’s where measurement diverges from reality.

At the campaign level, everything looks like it’s working. Multiple campaigns show strong performance, reinforcing the idea that the structure is sound. But at the account level, efficiency doesn’t scale. Incremental spend doesn’t produce incremental conversions; it redistributes credit.

You see it in the inconsistencies:

- Campaign-level conversions don’t reconcile with account totals

- GA4 (Google Analytics 4) shows fewer unique conversion paths than Google Ads

- Branded traffic appears fragmented across multiple campaigns

That messy dashboard isn’t a bug; it’s a feature of an ecosystem that prioritizes credit distribution over incremental growth.

And because the platform never surfaces this overlap directly, detection becomes a reconstruction exercise.

Detecting Brand Cannibalization Campaign by Campaign

Brand cannibalization isn’t visible in a single report. It has to be reconstructed. Search, PMax, and AI-driven expansion each expose partial query data, forcing operators to stitch together signals, patterns, and performance shifts to identify overlap. Effective detection means triangulating across systems rather than trusting any single source of truth.

Search campaigns provide query-level data (with privacy limits). PMax provides aggregated themes. AI-driven expansion sits somewhere in between. Each system exposes just enough data to evaluate itself but not enough to understand how they overlap.

Detection only works when you reconcile all three.

Checking PMax for Brand Query Absorption

PMax now exposes search queries through search term reports (rolled out in 2025), but visibility still has limits, so brand detection requires combining direct query data with pattern recognition across Search Themes. Start with the PMax search terms report: filter for your brand name, misspellings, and brand + modifier combinations. This is your primary detection tool.

But low-volume queries still hide below privacy thresholds, and some branded traffic won’t surface in the report. That’s where Search Themes (PMax → Insights → Search Themes) remain useful as a supplementary signal.

Themes that appear non-brand, like “running shoes” or “high-end footwear,” can be heavily composed of branded queries underneath. The search terms report helps you quantify what’s actually brand, but themes without enough volume to surface individual queries still require pattern recognition.

The clearest signals are:

- A small number of themes driving disproportionate conversions

- Unusually strong return on ad spend (ROAS) concentrated in those themes

- Simultaneous improvement in PMax performance and softening in Brand Search

For deeper analysis, pull search terms data via Google Ads Scripts using AdsApp.search() with GAQL queries filtered by campaign type, then cross-reference against a brand keyword list. The Google Ads API’s SearchTermView resource works for larger accounts running this programmatically. PMax search term data is now accessible through these methods, though visibility is still more limited than Search campaigns for low-volume queries.

The practical approach: export PMax search terms and Search campaign search terms into a shared sheet, flag brand term overlap, and calculate the percentage of PMax conversions coming from brand queries. Cross-reference against Search Themes for patterns that don’t surface at the query level. The most reliable validation is running a PMax experiment with brand exclusions on versus off and comparing incrementality.

Even then, you’re not proving overlap; you’re triangulating it.
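To make the shared-sheet step concrete, here is a minimal Python sketch that assumes both search terms reports have already been exported into rows. The `SearchTermRow` schema, the `"PMAX"`/`"SEARCH"` labels, the helper names, and the brand “Northpeak” are all hypothetical, and brand detection is a naive substring check, so misspellings will be missed.

```python
from dataclasses import dataclass


@dataclass
class SearchTermRow:
    # Hypothetical export schema: one row per campaign type and search term.
    campaign_type: str  # assumed values: "PMAX" or "SEARCH"
    search_term: str
    conversions: float


def is_brand(term: str, brand_terms: list[str]) -> bool:
    """Naive brand check: true if any brand term appears in the query (case-insensitive)."""
    query = term.lower()
    return any(b.lower() in query for b in brand_terms)


def pmax_brand_share(rows: list[SearchTermRow], brand_terms: list[str]) -> float:
    """Percentage of PMax conversions that came from brand queries."""
    pmax_rows = [r for r in rows if r.campaign_type == "PMAX"]
    total = sum(r.conversions for r in pmax_rows)
    if total == 0:
        return 0.0
    brand = sum(r.conversions for r in pmax_rows if is_brand(r.search_term, brand_terms))
    return 100.0 * brand / total


def overlapping_brand_terms(rows: list[SearchTermRow], brand_terms: list[str]) -> set[str]:
    """Brand queries that appear in both the PMax and the Search exports."""
    seen: dict[str, set[str]] = {}
    for r in rows:
        if is_brand(r.search_term, brand_terms):
            seen.setdefault(r.campaign_type, set()).add(r.search_term.lower())
    return seen.get("PMAX", set()) & seen.get("SEARCH", set())


rows = [
    SearchTermRow("PMAX", "northpeak jackets", 30),
    SearchTermRow("PMAX", "waterproof hiking jacket", 10),
    SearchTermRow("SEARCH", "northpeak jackets", 50),
    SearchTermRow("SEARCH", "northpeak", 40),
]
print(pmax_brand_share(rows, ["northpeak"]))         # 75.0
print(overlapping_brand_terms(rows, ["northpeak"]))  # {'northpeak jackets'}
```

A high brand share here is a prompt to run the brand-exclusion experiment, not proof of cannibalization on its own.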

Auditing Broad Match Semantic Expansion on Brand

Broad Match pulls in brand queries through semantic expansion, and you won’t catch it by filtering individual terms.

The search terms report surfaces the obvious cases quickly: brand terms, misspellings, brand + modifiers. The real leakage is the long tail of variants that keep reappearing through close matching.

Misspelled brand names, no-space variations, and brand + category combinations are continuously reintroduced through semantic matching. Even queries you’ve already excluded can reappear in slightly altered forms.

This is why operators rely on N-gram analysis. Instead of reviewing individual queries, they identify recurring patterns (brand fragments, modifier combinations, and repeated structures) that show up across campaigns.

When those patterns appear in both brand and non-brand campaigns, it’s not expansion; it’s fragmentation.
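A bare-bones version of N-gram analysis, sketched in Python under the assumption that you have two plain lists of search terms (one from the brand campaign, one from non-brand campaigns). The function names, the `min_count` cutoff, the optional `fragments` filter, and the brand “Northpeak” are illustrative choices, not a standard method.

```python
from collections import Counter
from typing import Optional


def ngrams(text: str, n: int) -> list[str]:
    """Word-level n-grams of one search term, lowercased."""
    words = text.lower().split()
    return [" ".join(words[i:i + n]) for i in range(len(words) - n + 1)]


def ngram_counts(terms: list[str], n: int) -> Counter:
    """How often each n-gram occurs across a list of search terms."""
    counts: Counter = Counter()
    for term in terms:
        counts.update(ngrams(term, n))
    return counts


def shared_ngrams(
    brand_campaign_terms: list[str],
    nonbrand_campaign_terms: list[str],
    n: int = 2,
    min_count: int = 2,
    fragments: Optional[list[str]] = None,
) -> dict[str, int]:
    """N-grams recurring in non-brand search terms that also occur in brand-campaign terms."""
    brand_counts = ngram_counts(brand_campaign_terms, n)
    nonbrand_counts = ngram_counts(nonbrand_campaign_terms, n)
    shared = {}
    for gram, count in nonbrand_counts.items():
        if count < min_count or gram not in brand_counts:
            continue
        # Optional filter: keep only n-grams containing a known brand fragment.
        if fragments and not any(f.lower() in gram for f in fragments):
            continue
        shared[gram] = count
    return shared


brand_side = ["northpeak rain jacket", "northpeak jacket sale"]
nonbrand_side = ["northpeak rain jacket women", "best northpeak rain jacket", "packable rain jacket"]
print(shared_ngrams(brand_side, nonbrand_side))                           # {'northpeak rain': 2, 'rain jacket': 3}
print(shared_ngrams(brand_side, nonbrand_side, fragments=["northpeak"]))  # {'northpeak rain': 2}
```

Without the fragment filter, generic bigrams like “rain jacket” also show up as shared, which is expected overlap rather than brand leakage.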

This never fully resolves. New variants keep emerging and low-volume queries stay hidden. You’re not trying to eliminate brand leakage; you’re trying to contain it.
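The misspelled and no-space variants described above can be flagged with fuzzy matching. The sketch below is one hedged approach using Python’s standard `difflib.SequenceMatcher`; the 0.8 similarity threshold, the function names, and the brand “Northpeak” are assumptions to tune, and very short brand names will produce false positives with any threshold.

```python
import difflib
import re


def _squash(text: str) -> str:
    """Lowercase and keep only letters and digits ("North-Peak" -> "northpeak")."""
    return re.sub(r"[^a-z0-9]", "", text.lower())


def looks_like_brand(query: str, brand: str, threshold: float = 0.8) -> bool:
    """Flag no-space variants, brand + category combinations, and near-miss misspellings."""
    brand_key = _squash(brand)
    # No-space, hyphenated, and brand + category variants all contain the squashed brand.
    if brand_key in _squash(query):
        return True
    # Misspellings: compare the brand with each word and each adjacent word pair.
    words = re.findall(r"[a-z0-9]+", query.lower())
    candidates = words + ["".join(words[i:i + 2]) for i in range(len(words) - 1)]
    return any(
        difflib.SequenceMatcher(None, brand_key, c).ratio() >= threshold
        for c in candidates
    )


for q in ["north peak jackets", "nortpeak jacket", "NorthPeak-Jackets", "winter jackets"]:
    print(q, looks_like_brand(q, "Northpeak"))
```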

Finding AI Max Overlap in Search Terms

AI-driven query expansion keeps generating new branded variants from conversion signals, so one-time cleanup doesn’t hold. AI Max for Search, now the fastest-growing AI-powered Search ads product, brings keywordless matching to Search campaigns, meaning brand queries can enter non-brand campaigns through yet another automated pathway. Unlike Broad Match, which reacts to queries, AI systems create new ones, reintroducing brand into non-brand campaigns even after cleanup.

Detection becomes a comparison exercise:

- AI-generated queries vs. core brand terms

- Overlaps with Broad Match patterns

- Similarities to PMax themes

If you see a brand variant pop up in a non-brand campaign, you’ve found a leak. If you see ten, your account has a structural integrity problem.
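The comparison exercise can be sketched as set membership across exported term lists. This Python sketch assumes you have AI Max, Broad Match, and PMax search terms as plain lists; the function name, the pathway labels, and the substring-based brand check are hypothetical. A variant listed under several pathways is entering the account more than one way.

```python
def compare_pathways(
    ai_max_terms: list[str],
    broad_terms: list[str],
    pmax_terms: list[str],
    brand_terms: list[str],
) -> dict[str, list[str]]:
    """Map each brand variant found in AI Max search terms to the other pathways it also appears in."""
    brands = [b.lower() for b in brand_terms]
    pools = {
        "broad": {t.lower() for t in broad_terms},
        "pmax": {t.lower() for t in pmax_terms},
    }
    report: dict[str, list[str]] = {}
    for term in sorted({t.lower() for t in ai_max_terms}):
        if any(b in term for b in brands):  # crude brand check
            report[term] = [name for name, pool in pools.items() if term in pool]
    return report


print(compare_pathways(
    ai_max_terms=["Northpeak Jacket", "rain jacket", "northpeak outlet"],
    broad_terms=["northpeak jacket", "hiking boots"],
    pmax_terms=["northpeak jacket", "northpeak outlet"],
    brand_terms=["northpeak"],
))
# {'northpeak jacket': ['broad', 'pmax'], 'northpeak outlet': ['pmax']}
```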

What makes this harder is that the system is not static. It continuously generates new variations, which means fixes don’t hold. You remove one set of queries, and new ones emerge based on the same underlying signal.

Without continuous comparison and cleanup, brand demand keeps re-entering through newly generated paths.

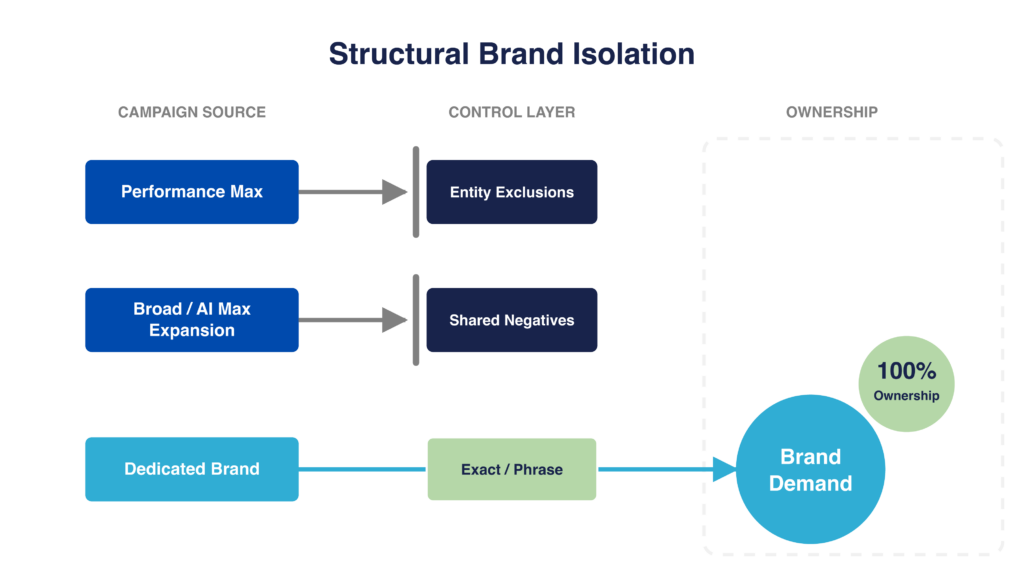

Isolating Brand to a Single Campaign Owner

Eliminating brand cannibalization requires assigning a single campaign full ownership of brand and enforcing that ownership across the account by coordinating controls (negatives, PMax exclusions, and match types) to prevent other campaigns from entering the same auctions. Without that system, brand remains a shared resource and internal competition persists.

This isn’t an ‘optimization’ task you check off once a month; it’s structural governance that protects your unit economics.

As long as multiple campaigns are eligible to serve on brand, the system will continue to:

- Compete internally

- Inflate CPCs

- Fragment attribution

The goal is simple: one query, one owner.

That requires three coordinated controls:

- Shared negatives to block query-level entry

- PMax exclusions to limit entity-level targeting

- Match types to route demand through a single pathway

Each solves a different part of the problem. None work in isolation.

Building Shared Brand Negative Keyword Lists

Shared negative keyword lists act as the enforcement layer that blocks non-brand campaigns from entering brand auctions. Their effectiveness depends on continuous expansion: capturing new variants as they emerge so that control holds over time.

That means building a single negative keyword list that captures your core brand terms and applying it across every non-brand campaign: Search, Broad Match, and any campaign using automated expansion.

Build the list in Tools & Settings → Shared Library → Negative Keyword Lists. Create one list called “Brand Negatives” and apply it to every non-brand campaign at the campaign level. Shared negative keyword lists have a documented limit of 5,000 keywords per list, with a maximum of 20 lists per account.
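If your brand vocabulary outgrows one list, those limits decide how it gets split. A minimal Python sketch, assuming the 5,000-keywords-per-list and 20-lists-per-account limits quoted above (confirm them against current Google Ads documentation); the function name and the numbered list-naming scheme are hypothetical.

```python
MAX_KEYWORDS_PER_LIST = 5_000
MAX_LISTS_PER_ACCOUNT = 20


def split_into_shared_lists(
    keywords: list[str], base_name: str = "Brand Negatives", existing_lists: int = 0
) -> dict[str, list[str]]:
    """Deduplicate negatives and split them into lists that respect the per-list cap."""
    # Keywords are not case-sensitive, so dedupe on the lowercased form.
    unique = list(dict.fromkeys(k.strip().lower() for k in keywords if k.strip()))
    chunks = [
        unique[i:i + MAX_KEYWORDS_PER_LIST]
        for i in range(0, len(unique), MAX_KEYWORDS_PER_LIST)
    ]
    # Other shared lists already in the account count toward the same cap.
    if existing_lists + len(chunks) > MAX_LISTS_PER_ACCOUNT:
        raise ValueError("not enough shared-list slots left in this account")
    if len(chunks) == 1:
        return {base_name: chunks[0]}
    return {f"{base_name} {i + 1}": chunk for i, chunk in enumerate(chunks)}


lists = split_into_shared_lists(["[northpeak]", "[NorthPeak]", '"northpeak outlet"'])
print(lists)  # {'Brand Negatives': ['[northpeak]', '"northpeak outlet"']}
```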

The goal is not just coverage. It’s consistency. Every non-brand campaign should be structurally incapable of entering a brand auction.

Where this breaks down is in execution.

Common mistakes operators make:

- Adding brand negatives at the ad group level instead of campaign level, which means new ad groups don’t inherit them

- Applying negatives to the brand campaign itself by accident (especially in bulk uploads), which kills your own brand coverage

- Using broad match negatives on brand terms, which can over-block and suppress legitimate non-brand traffic that happens to contain a brand fragment
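The first two mistakes are configuration checks you can automate against an export of your campaign settings. This Python sketch assumes a hypothetical `CampaignConfig` snapshot per campaign; how you populate it (UI export, Ads Scripts, or the API) is up to you, and the field and function names are illustrative.

```python
from dataclasses import dataclass, field


@dataclass
class CampaignConfig:
    # Hypothetical snapshot of one campaign's negative keyword setup.
    name: str
    is_brand: bool
    shared_lists: set[str] = field(default_factory=set)  # lists attached at campaign level
    brand_negatives_in_ad_groups: bool = False  # brand negatives found at ad group level


def audit_brand_negatives(
    campaigns: list[CampaignConfig], list_name: str = "Brand Negatives"
) -> list[str]:
    """Return human-readable issues for the common setup mistakes."""
    issues = []
    for c in campaigns:
        attached = list_name in c.shared_lists
        if c.is_brand and attached:
            issues.append(f"{c.name}: brand list attached to the brand campaign")
        elif not c.is_brand and not attached:
            issues.append(f"{c.name}: '{list_name}' not attached at campaign level")
        if not c.is_brand and c.brand_negatives_in_ad_groups:
            issues.append(f"{c.name}: brand negatives at ad group level; new ad groups won't inherit them")
    return issues


campaigns = [
    CampaignConfig("Brand - Exact", True, {"Brand Negatives"}),
    CampaignConfig("Generic - Broad", False, {"Brand Negatives"}),
    CampaignConfig("Generic - AI Max", False, set(), brand_negatives_in_ad_groups=True),
]
for issue in audit_brand_negatives(campaigns):
    print(issue)
```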

Match type on negatives matters. Use exact match negatives for core brand terms. Use phrase match negatives for brand + modifier patterns. Broad match negatives on brand terms are risky because they can suppress legitimate queries.
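To see why broad match negatives over-block, here is a simplified Python model of negative keyword matching in Google Ads notation (`[exact]`, `"phrase"`, broad). It reflects the documented rule that negatives don’t expand to close variants, but it ignores punctuation handling and other normalization details, so treat it as an illustration rather than a replica of Google’s matcher. “Summit Gear” is a hypothetical brand chosen because its words are also common non-brand terms.

```python
def parse_negative(keyword: str) -> tuple[str, list[str]]:
    """Split Google Ads keyword notation into (match type, lowercase words)."""
    kw = keyword.strip()
    if kw.startswith("[") and kw.endswith("]"):
        return "exact", kw[1:-1].lower().split()
    if kw.startswith('"') and kw.endswith('"'):
        return "phrase", kw[1:-1].lower().split()
    return "broad", kw.lower().split()


def is_blocked(query: str, negative: str) -> bool:
    """Simplified negative matching; negatives do not expand to close variants."""
    match_type, neg_words = parse_negative(negative)
    query_words = query.lower().split()
    if not neg_words:
        return False
    if match_type == "exact":
        # Exact negative: the query must be exactly these words, nothing extra.
        return query_words == neg_words
    if match_type == "phrase":
        # Phrase negative: these words, in this order, anywhere in the query.
        n = len(neg_words)
        return any(query_words[i:i + n] == neg_words for i in range(len(query_words) - n + 1))
    # Broad negative: every negative word appears somewhere, in any order.
    return set(neg_words) <= set(query_words)


queries = ["summit gear", "summit gear jackets", "gear for summit climbs"]
for neg in ["[summit gear]", '"summit gear"', "summit gear"]:
    print(neg, [q for q in queries if is_blocked(q, neg)])
```

In this model, the broad negative also catches “gear for summit climbs,” a legitimate non-brand query, while the exact and phrase versions leave it alone.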

Adding your core brand terms is table stakes. What actually determines effectiveness is how quickly you expand coverage based on what the system is doing in real time. That requires shifting from a static list to a feedback loop.

In practice, that loop looks like this:

- Pull search terms from non-brand campaigns on a recurring basis

- Identify new brand variants and patterns (misspellings, modifiers, formatting variations)

- Add them back into the shared negative list

- Reapply across all non-brand campaigns
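The loop above can be sketched in Python as a diff between fresh non-brand search terms and the current negative list. Assumptions: brand variants are detected by hypothetical substring fragments, existing coverage is checked with simplified exact/phrase negative semantics (broad negatives are ignored), and new variants are proposed as exact match negatives, which is one conservative choice rather than the only one.

```python
def _covered(term_words: list[str], negative: str) -> bool:
    """Simplified check: does an exact or phrase negative already block this term?"""
    neg = negative.strip().lower()
    if neg.startswith("[") and neg.endswith("]"):
        return term_words == neg[1:-1].split()
    if neg.startswith('"') and neg.endswith('"'):
        words = neg[1:-1].split()
        n = len(words)
        return n > 0 and any(term_words[i:i + n] == words for i in range(len(term_words) - n + 1))
    return False  # broad negatives are ignored here on purpose


def propose_additions(
    nonbrand_terms: list[str], brand_fragments: list[str], current_negatives: list[str]
) -> list[str]:
    """New exact match negatives for brand variants not yet covered by the shared list."""
    fragments = [f.lower() for f in brand_fragments]
    proposals = []
    # dict.fromkeys dedupes while preserving first-seen order.
    for term in dict.fromkeys(t.strip().lower() for t in nonbrand_terms):
        if not any(f in term for f in fragments):
            continue  # not a brand variant
        if any(_covered(term.split(), neg) for neg in current_negatives):
            continue  # already blocked
        proposals.append(f"[{term}]")
    return proposals


print(propose_additions(
    ["northpeak outlet", "nortpeak jackets", "northpeak jacket sale", "rain jacket", "Northpeak Outlet"],
    brand_fragments=["northpeak", "nortpeak"],
    current_negatives=["[northpeak]", '"northpeak jacket"'],
))
# ['[northpeak outlet]', '[nortpeak jackets]']
```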

This is not about catching everything upfront. It’s about tightening the system over time.

The reason this matters is control drift. Even with strong initial coverage, brand queries will re-enter through new variants, especially in campaigns using Broad Match or AI-driven expansion. If the negative list isn’t updated, overlap rebuilds quietly and you’re back to competing against yourself.

The most effective teams treat this like ongoing infrastructure:

- Brand negatives are shared, not campaign-specific

- Updates are driven by observed query behavior, not assumptions

- Maintenance is tied to a regular reporting cadence, not ad hoc cleanup

If you’re running this across multiple accounts in an MCC, shared negative lists don’t propagate across accounts. Each account needs its own list. This is a common ops miss in agency environments.

There are still limits. Some queries won’t surface due to privacy thresholds. New variants will always emerge. And automated systems will continue to test the edges of your exclusions.

But the objective isn’t perfection; it’s containment.

A well-maintained shared negative list doesn’t eliminate brand leakage entirely. It reduces the surface area enough that one campaign can reliably own brand demand, which is what allows bidding, attribution, and reporting to stabilize.

Setting Account-Level Brand Exclusions in PMax

PMax brand exclusions act as a containment layer, limiting Google’s ability to target your brand without providing full query-level control. Because they operate on Google’s internal brand entity system, not keywords, coverage is approximate and requires ongoing validation.

In practice, this is the PMax equivalent of negative keywords. Once applied, it creates a baseline constraint: PMax should no longer intentionally target queries associated with your brand. This is what allows a dedicated brand campaign to start reclaiming ownership of branded demand.

But unlike negative keywords, you’re not working with raw query strings. Instead of blocking specific search terms, PMax brand exclusions operate on Google’s internal “brand entity” system: a predefined list of brands that Google has mapped across queries, domains, and user intent.

In practice, that means you’re not uploading your brand keywords (e.g., “your brand,” misspellings, product names). You’re selecting a brand entity that Google has already defined. When you exclude that entity, Google attempts to block queries it believes are associated with that brand.

For example:

- You’re not excluding “nike shoes,” “nike running shoes,” and “nik shoes” individually

- You’re excluding the “Nike” entity, and Google decides which queries fall under that definition

That distinction matters because control shifts from exact query matching (keywords) to Google’s interpretation of brand intent (entities).

The UI path: Google Ads → Campaign → Settings → Additional settings → Brand Exclusions, then search for your brand entity from Google’s predefined list. If your brand isn’t listed, you submit a brand request through the same UI or via Google support.

That works cleanly for large, well-established brands. It breaks down quickly for everyone else.

If you’re not a household name, you’re stuck in ‘Brand Request’ purgatory: brand requests can take weeks while a support rep manually validates your entity, and during that window PMax continues to capture brand traffic. Some operators run campaign-level negative keywords as a stopgap (these don’t work the same way as entity exclusions but reduce exposure). For mid-market advertisers, multi-brand portfolios, or companies with evolving naming structures, this becomes a real operational bottleneck.

If you manage a portfolio of brands (parent company + sub-brands + product lines), each needs its own entity exclusion. Google doesn’t auto-cascade from parent brand to sub-brands. Missing one sub-brand means PMax still captures that traffic.

Even once your brand is available, coverage is approximate.

The exclusion applies at the entity level, not the query level. That means:

- Some modifiers may still slip through

- Product names may not be fully mapped to the parent brand

- Misspellings and long-tail variants can remain eligible

- Comparison queries (e.g., “Nike vs Adidas running shoes”) may not be blocked because Google interprets them as non-brand intent

So while exclusions reduce brand capture, they don’t eliminate it.

This shifts the role of PMax exclusions from a hard stop to a containment layer.

Important: PMax now supports both campaign-level negative keywords (up to 10,000 per campaign, increased from 100 in March 2025) and shared negative keyword lists (added mid-2025). Apply your brand negative list to PMax the same way you apply it to Search campaigns. This is a second control layer that works alongside brand entity exclusions. Use both: entity exclusions for broad brand containment, and campaign-level negative keywords for specific terms, modifiers, and variants the entity system misses.

The way to operate them effectively is to treat them as part of a broader control system. After implementation, you’re not checking whether they exist; you’re validating whether they’re working. That shows up in a few places:

- PMax search themes should show fewer brand signals over time

- Brand campaign impression share should recover

- Branded CPCs should stabilize as internal competition decreases

If those signals don’t move, the exclusion isn’t holding.

After enabling exclusions, check PMax → Insights → Search Themes weekly for 4 weeks. Brand-related themes should decline in volume. If they don’t, the entity mapping isn’t covering your variants.
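The four-week check can be reduced to a simple comparison. This Python sketch assumes you record one brand-related volume figure for the week before the change and then one per week afterward; the 30% `min_drop` threshold and the function name are hypothetical and should be set against your own baseline noise.

```python
def exclusion_is_holding(
    baseline_week: float, weekly_after: list[float], min_drop: float = 0.3
) -> bool:
    """True if brand volume over the last two weeks is at least min_drop below the pre-change week."""
    if len(weekly_after) < 4:
        raise ValueError("need at least four weekly data points after the change")
    if baseline_week <= 0:
        raise ValueError("baseline week has no brand volume to compare against")
    # Averaging the last two weeks smooths out a single noisy week.
    recent = sum(weekly_after[-2:]) / 2
    return (baseline_week - recent) / baseline_week >= min_drop


print(exclusion_is_holding(1200, [1000, 800, 650, 550]))    # True
print(exclusion_is_holding(1200, [1180, 1210, 1150, 1190]))  # False
```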

There is no alert when leakage resumes. As new brand variants emerge or Google’s entity mapping lags behind real query behavior, gaps reappear. That’s why exclusions need to be revisited alongside search term analysis and campaign performance, not treated as a one-time configuration.

The goal isn’t perfect coverage. It’s enough containment that PMax stops competing aggressively in brand auctions, allowing the rest of your structure to enforce ownership.

Tightening Match Types on the Dedicated Brand Campaign

Tightening match types on brand campaigns consolidates query ownership by limiting how Google can interpret brand intent. Exact and Phrase create a controlled boundary, preventing semantic expansion from blurring the line between brand and non-brand campaigns.

Most accounts start with some level of Broad Match on brand terms, either intentionally or as a legacy holdover.

The problem is that Broad Match doesn’t just capture your core brand query; it expands into adjacent intent, including product categories, modifiers, and even queries that belong in non-brand campaigns.

The shift is straightforward but structural. Instead of allowing Broad Match to interpret brand intent, you explicitly define it:

- Exact match anchors core brand queries (e.g., [brand])

- Phrase match captures controlled variants (e.g., “brand shoes”)

This forces Google to route brand demand through a single, predictable pathway rather than letting multiple campaigns interpret it differently.

The impact shows up quickly:

- Query mapping becomes more consistent

- CPC volatility decreases as internal competition drops

- Brand and non-brand traffic stop bleeding into each other through semantic expansion

Broad Match still has a role, but not here. It belongs in non-brand campaigns where discovery is the goal. On brand, control outperforms coverage.

Validating the Fix: A 3-Step Framework

Fixing brand cannibalization isn’t confirmed by setup; it’s proven through data. Validation follows a sequence: first containment, then auction stability, then financial impact, with each step confirming that internal competition has been removed. If any stage fails, the system is still overlapping.

Step 1: Confirm Brand Containment (Search Terms)

Brand containment is a pass/fail test: brand queries should only exist in one campaign. If they show up anywhere else, you haven’t fixed the problem.

Within the first one to two weeks, search term audits should show clean separation. If brand queries are still appearing outside your dedicated brand campaign, the structure isn’t enforced yet.

This is a binary check:

- Clean separation → move forward

- Any leakage → fix controls
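Because containment is binary, it translates directly into a pass/fail function. A hedged Python sketch, assuming search terms grouped by campaign name and a hypothetical list of brand fragments; substring matching is deliberately crude, so pair it with a manual review for misspellings.

```python
def containment_check(
    terms_by_campaign: dict[str, list[str]],
    brand_campaign: str,
    brand_fragments: list[str],
) -> tuple[bool, dict[str, list[str]]]:
    """Pass only if brand queries appear in no campaign other than the brand campaign."""
    fragments = [f.lower() for f in brand_fragments]
    leaks: dict[str, list[str]] = {}
    for campaign, terms in terms_by_campaign.items():
        if campaign == brand_campaign:
            continue
        hits = sorted({t.lower() for t in terms if any(f in t.lower() for f in fragments)})
        if hits:
            leaks[campaign] = hits
    return (not leaks, leaks)


report = {
    "Brand": ["northpeak", "northpeak jackets"],
    "Generic": ["rain jacket"],
    "PMax": ["northpeak outlet", "hiking boots"],
}
print(containment_check(report, "Brand", ["northpeak"]))
# (False, {'PMax': ['northpeak outlet']})
```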

Step 2: Validate Auction Stability (Auction Insights)

Auction stability confirms that internal competition has been eliminated: brand campaign metrics should align with real market dynamics, not internal shifts. Consistent impression share and predictable positioning indicate clean ownership. Ongoing volatility without external drivers signals unresolved overlap.

Before the fix, brand campaigns often show instability that doesn’t align with real competitors:

- Fluctuating impression share

- Inconsistent overlap rates

- Position volatility without market explanation

After isolation, that noise should disappear.

You’re looking for consistency. If performance is still volatile without external cause, internal overlap still exists.
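One way to put a number on “consistency” is the coefficient of variation (standard deviation divided by mean) of daily impression share. This is an assumed metric, not one Google reports; the 0.1 cutoff and the sample values are hypothetical, and exports that show values like “< 10%” would need parsing into floats first.

```python
from statistics import mean, pstdev


def coefficient_of_variation(values: list[float]) -> float:
    """Population standard deviation divided by the mean (raises on an empty list)."""
    avg = mean(values)
    return pstdev(values) / avg if avg else float("inf")


def is_stable(daily_impression_share: list[float], max_cv: float = 0.1) -> bool:
    """Treat the brand campaign as stable if day-to-day variation is small relative to its level."""
    return coefficient_of_variation(daily_impression_share) <= max_cv


before = [0.62, 0.80, 0.55, 0.90, 0.70]  # hypothetical pre-fix daily impression share
after = [0.88, 0.90, 0.89, 0.91, 0.90]   # hypothetical post-fix daily impression share
print(is_stable(before), is_stable(after))  # False True
```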

Step 3: Confirm Financial Impact (CPC + Volume)

Financial validation confirms whether structural changes translated into efficiency: branded CPCs should stabilize or decline while maintaining impression share and conversion volume. The relationship between these metrics reveals whether internal competition has been removed or simply redistributed.

Track three metrics together:

- CPC

- Impression share

- Conversion volume

The relationship tells you what changed:

- CPC down + volume stable → overlap removed

- CPC flat/up + competition unchanged → overlap remains

- CPC down + volume down → demand was removed, not just overlap

Watch for false signals:

- False positive: CPC drops but so does impression share. This means you didn’t just remove overlap, you accidentally suppressed brand coverage (likely over-aggressive negatives or a negative keyword conflict).

- False negative: CPC stays flat but PMax performance drops. This could mean the exclusions worked but PMax was propping up overall account metrics by claiming brand credit. The “real” non-brand performance of PMax is now visible, and it’s weaker than it looked.

Don’t evaluate financial impact before 30 days. Automated bidding needs 2-3 weeks to recalibrate after structural changes. Evaluating at day 7 will show instability that isn’t real; it’s the learning period adjusting.

This is where structural fixes translate into margin.
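The metric relationships in Step 3, plus the 30-day rule, can be encoded as a small decision function. This Python sketch is an assumption-heavy simplification: the ±5% tolerance, the metric keys, and the `competition_changed` flag (which you would set from Auction Insights) are hypothetical, and it does not cover the PMax-side false negative, which needs PMax data.

```python
def classify_outcome(
    before: dict[str, float],
    after: dict[str, float],
    days_since_change: int,
    competition_changed: bool = False,
    tol: float = 0.05,
) -> str:
    """Map before/after brand campaign metrics to the Step 3 outcomes."""
    if days_since_change < 30:
        return "too early: automated bidding is still recalibrating"

    def pct_change(metric: str) -> float:
        return (after[metric] - before[metric]) / before[metric]

    cpc = pct_change("cpc")
    impression_share = pct_change("impression_share")
    conversions = pct_change("conversions")

    if cpc < -tol and impression_share < -tol:
        return "false positive: brand coverage suppressed, check negatives"
    if cpc < -tol and conversions < -tol:
        return "demand removed, not just overlap"
    if cpc < -tol:
        return "overlap removed"
    if not competition_changed:
        return "overlap remains"
    return "inconclusive: external competition changed"


base = {"cpc": 1.00, "impression_share": 0.90, "conversions": 500}
print(classify_outcome(base, {"cpc": 0.80, "impression_share": 0.91, "conversions": 495}, 35))
# overlap removed
```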

How This Plays Out Over Time

| Timeframe | What You’re Validating | Primary Signal | Success Indicator |
| --- | --- | --- | --- |
| Weeks 1-2 | Containment | Search terms | Zero brand queries in non-brand campaigns |
| Weeks 3-4 | Auction stability | Auction Insights | Stable impression share, no internal volatility |
| Day 30 | Financial impact | CPC + volume | CPC stabilizing or declining with steady conversions |
| Day 60 | System consistency | Full account view | Efficiency improves without volume loss |
| Ongoing | Maintenance | Monthly audits | No new brand leakage introduced |

What a Healthy Brand Auction Actually Looks Like

A healthy brand auction has one owner. If multiple campaigns can serve, you don’t have optimization; you have competition. And if you’re competing with yourself, you’re paying more for the same demand and calling it performance.

When the system is working, brand behaves like a controlled asset:

- One campaign consistently owns brand queries

- CPCs move in line with competitor activity, not internal pressure

- Attribution is concentrated and stable

- Incremental spend produces proportional incremental conversions

Nothing feels volatile, because nothing internal is competing.

When it’s not, brand becomes a shared resource:

- Multiple campaigns enter the same auctions

- CPCs rise without external pressure

- Conversions are fragmented across campaigns

- Account-level efficiency stays flat despite strong campaign metrics

From the outside, both look the same. Campaigns hit targets. ROAS looks healthy. Volume holds.

The difference shows up in cost and control.

In a controlled system, you pay for demand once and measure it cleanly. In a fragmented one, you pay for the same demand over and over and the reporting makes it look fine.

That’s the shift. This isn’t optimization; it’s ownership. When brand is controlled, performance becomes predictable. When it isn’t, you’re just bidding against yourself and calling it growth.