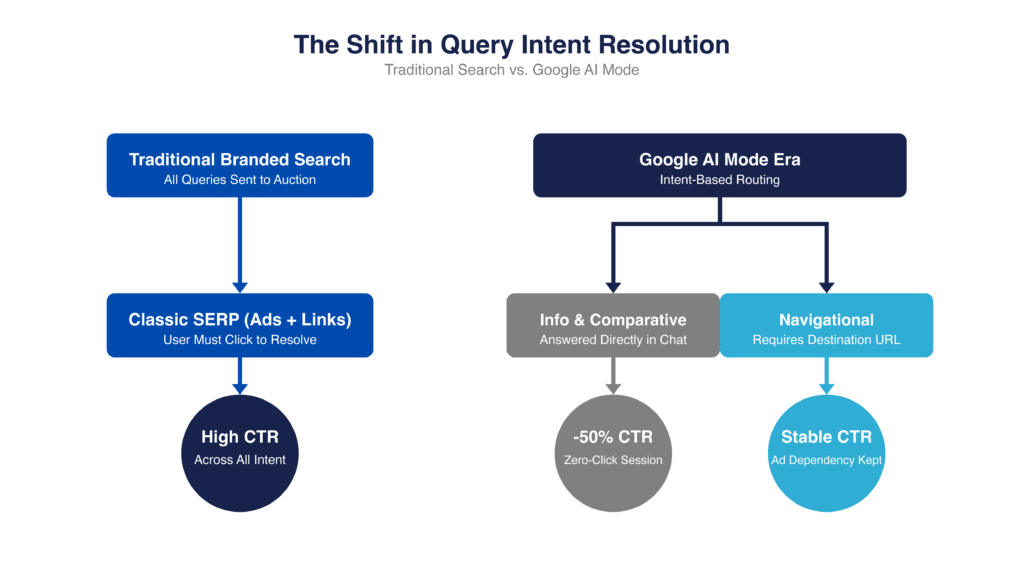

Google AI Mode is reducing the need for clicks on branded search by answering informational and comparative queries directly. This shows up as a subtle CTR decline that hides a deeper split between queries that still convert and those that no longer require engagement. The response isn’t to cut brand spend but to reallocate it toward high-intent queries and the content shaping AI-generated answers.

How AI Mode Actually Works on Branded Queries

Google AI Mode shifts branded search from a results page into a conversational session, where many queries are resolved without a click or a second auction. This shift is driven by intent: informational and comparative queries are increasingly intercepted and answered directly, while navigational queries still require site access. As a result, your brand is no longer defined by your ads, but by the crawlable content AI systems use to construct answers.

Historically, a low click-through rate (CTR) on a branded query meant weak positioning. Now, it can mean the opposite: the query was answered so effectively that no click was needed. This is the move to a zero-click reality.

On a traditional branded search engine results page (SERP), ads and organic listings compete for attention, and the user chooses where to go. Even with AI Overviews, that structure largely holds; ads remain visible and scrolling behavior still exists.

AI Mode breaks that model. A user may start with a normal query, but for informational or comparative searches, Google introduces an AI-generated response and shifts the interaction into a conversational session. From there, answers are generated and refined through follow-ups. The experience becomes session-based rather than auction-based, meaning the initial query may be the only moment where a traditional keyword auction runs before the interaction moves into the AI response.

Ads do appear inside AI Mode, but differently than in classic Search. Google began testing ads in AI Mode in May 2025 and expanded them through 2025 and into 2026; third-party tracking now puts ad presence at roughly a quarter of AI Mode results. These placements surface contextually inside the AI response, pulled from existing Search and Shopping activity via AI Max for Search and broad match, rather than being re-auctioned on every follow-up a user types into the chat. Eligibility currently requires AI Max for Search and/or broad match in at least one Search campaign, so a brand running pure exact-match Search may not be showing up in AI Mode at all. That is worth confirming before you conclude AI Mode is or isn’t capturing your branded demand.

This is where behavior diverges. Queries like “what does [brand] do” or “[brand] vs competitor” can now be resolved directly within AI Mode using synthesized descriptions, pricing context, and third-party comparisons, often without re-triggering new auctions. In contrast, queries like “[brand] login” or “[brand] pricing” still require a destination, so they remain anchored in the traditional SERP where ads capture demand.

The distinction is not just visibility, it’s dependency. AI Overviews still compete with your ad within the page. AI Mode changes whether the page and your ad are needed at all.

For branded campaigns, this creates a structural split: some queries remain reliable, click-driving channels, while others are intercepted and resolved before a click or even a second auction can occur.

Which Branded Queries Trigger AI Mode

AI Mode activation is driven by intent, specifically whether a query can be answered or extended into a conversation instead of requiring a destination. Informational and comparative queries are most likely to trigger AI Mode and lose ad visibility, while navigational queries remain anchored in the traditional SERP.

| Query Type | Example | AI Mode Trigger Likelihood | Ad Visibility |
| --- | --- | --- | --- |
| Informational | what does [brand] do, how [brand] works | High | Reduced: often answered or expanded into AI Mode |
| Comparative | [brand] vs competitor, [brand] alternatives | High | Reduced: user frequently continues in AI Mode |
| Transactional | [brand] pricing, buy [brand], [brand] demo | Medium | Medium: may stay on SERP or partially engage AI |
| Navigational | [brand] login, [brand].com | Low | High: user remains on traditional SERP |

For informational and comparative queries, users often start on a standard results page but are quickly presented with an AI-generated response and prompted to continue. That pivot point, where a user stops scrolling and starts chatting, is where traditional brand CTR collapses.

For navigational queries, that transition rarely happens. The user has a clear destination, so Google keeps them in the traditional SERP where ads and organic links remain essential.

Transactional queries are the current battleground, sitting in limbo between a click and a chat. Some users stay in the SERP and click through to pricing or demo pages. Others engage with AI-generated summaries before deciding where to go, creating more variability in ad visibility and performance.

The underlying rule is consistent: AI Mode activates when Google can extend the interaction into a multi-step answer rather than sending the user to a specific destination.

That distinction determines not just where your ad appears, but whether the user ever needs it to begin with.

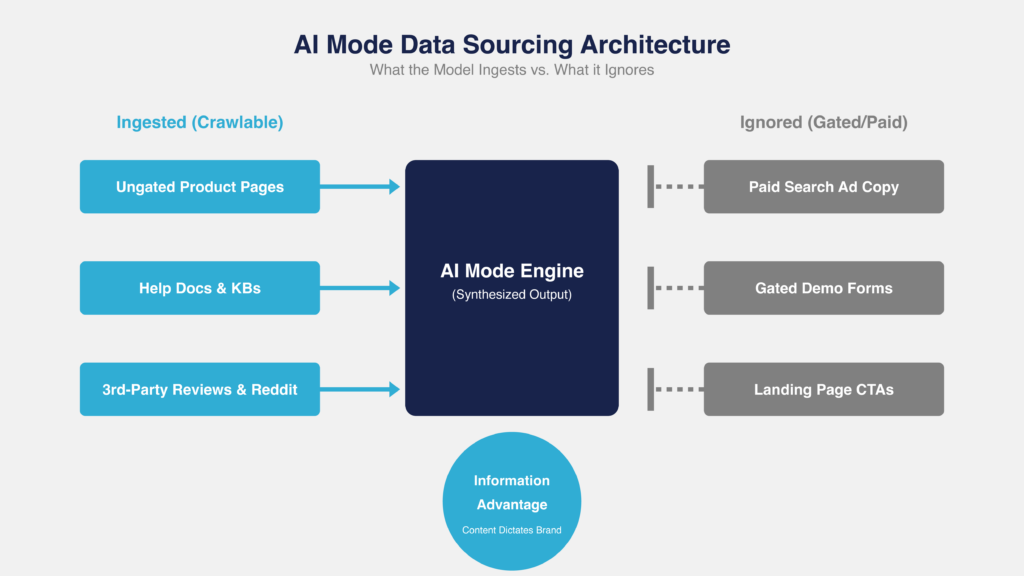

What AI Mode Pulls From (and What It Ignores)

AI Mode builds its answers from crawlable, ungated content, often prioritizing structured sources like help docs, reviews, and third-party content over marketing pages. Paid search inputs like ad copy, CTAs, and gated assets are largely ignored, creating a disconnect between what teams optimize and what users actually see.

In practice, AI Mode pulls from:

- Product and feature pages

- Help docs and knowledge bases

- Third-party review and comparison platforms (G2, which now owns Capterra, Software Advice, and GetApp after its 2025 acquisition, plus vertical-specific review sites where they rank)

- Independent comparison content

- Forum discussions, with Reddit the single heaviest third-party contributor across AI engines (YouTube transcripts and descriptions also cited regularly)

- Google-owned properties (support docs, Maps, YouTube); per SE Ranking’s March 2026 study of 1.3M citations, Google’s own properties alone account for 17.4% of AI Mode citations, more than YouTube, Reddit, Facebook, Amazon, Indeed, and Zillow combined

It does not use:

- Paid search ad copy

- Landing page CTAs

- Gated content (whitepapers, demo forms)

This creates a disconnect between what performance teams optimize and what AI Mode actually uses to represent the brand.

The friction shows up quickly. AI systems tend to skip “marketing speak” and pull from whatever is most structured and explicit, often your help docs, not your homepage. In some cases, that means a support article or an outdated third-party review becomes the source of truth. In worse cases, a years-old Reddit thread surfaces because it’s clear, crawlable, and easy to summarize.

Control mechanisms don’t carry over. Negative keywords can block a competitor in paid search, but they don’t stop AI Mode from mentioning that competitor or their pricing directly in a branded summary. At the same time, high-value comparison pages are often gated or buried, which means they’re excluded entirely from what the model can use.

For the PPC tooling and paid search automation vertical specifically, the sources that consistently surface in AI Mode citations are: r/PPC and r/googleads on Reddit; Search Engine Land, Search Engine Journal, and Search Engine Roundtable on the editorial side; Smarter Ecommerce, Optmyzr, Adalysis, WebFX, and Practical Ecommerce on the industry-blog side; G2’s consolidated review network (G2 plus the Capterra, Software Advice, and GetApp properties it now owns); and the Google Ads Help support domain, which is cited heavily for Performance Max, AI Max, and brand-controls questions. If your content isn’t mapped to how those sources frame your category, AI Mode will default to their framing, not yours.

Your explanatory, crawlable content, not your ads, is now your first impression on a meaningful share of branded queries.

If your content does not clearly answer what the product does, how it works, and how it compares, AI Mode will answer those questions anyway using whatever sources it can access.

Measuring the Real Impact on Your Brand Campaign Metrics

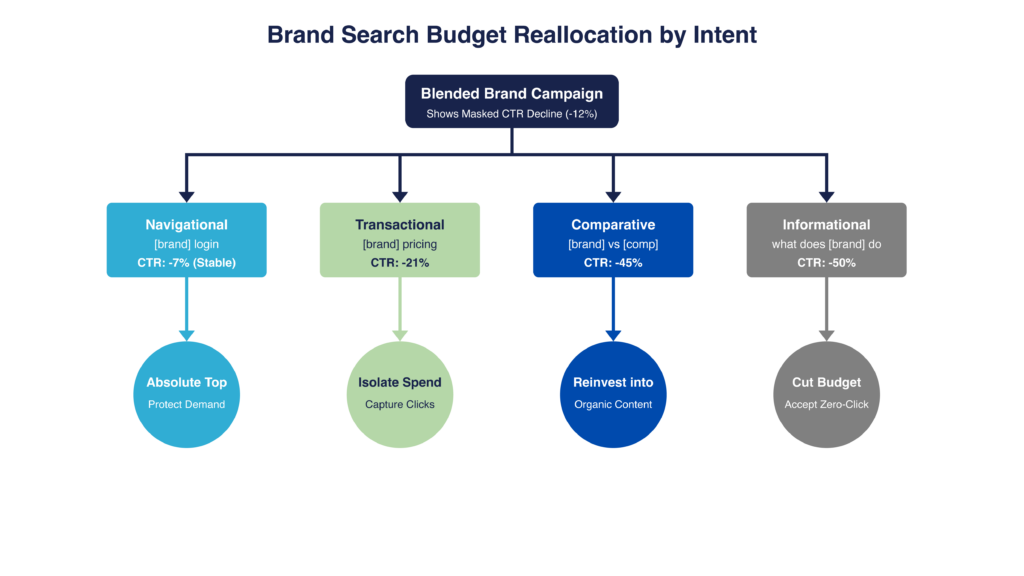

AI Mode impact appears as a subtle CTR decline that masks a deeper shift: clicks are being displaced on informational and comparative queries while navigational demand remains intact. Blended reporting hides this by averaging performance across intent types, making structural change look like minor degradation. The only way to see the real impact is to segment brand queries by intent and identify where clicks are no longer required.

In the account, this doesn’t look like a clear break. It looks like a small, explainable dip. CTR is down 10–15%, cost per click (CPC) is flat, conversions are mostly stable. Nothing obviously wrong.

The issue is how brand is reported. Most campaigns mix all query types into one set of metrics, so the parts that are breaking get averaged with the parts that are still working.

Instead of seeing informational queries collapse while navigational holds steady, you just see a mild decline at the campaign level. That’s what drives bad decisions: broad bid changes, budget cuts, or the assumption that demand is weakening.

When you pull search terms, the pattern is obvious:

- “[brand] login,” “[brand] pricing,” “[brand] demo” → unchanged

- “what does [brand] do,” “[brand] vs competitor” → impressions still there, clicks down

So the decline isn’t broad, it’s concentrated.

You’re still showing up for the same searches, but for part of that demand, the click is no longer needed.

AI Mode isn’t degrading performance, it’s displacing clicks on specific query types. But without segmentation, it all shows up as a generic drop.

So a “12% CTR decline” is often hiding something more like:

- Informational queries down ~50%

- Comparative queries down materially

- Navigational queries flat

The demand and impressions are still there, but for a growing share of queries, the answer happens before the ad ever gets used. And unless you break performance out by intent, you can’t see where your brand spend is still doing real work.

Segmenting Brand Queries by Intent in Search Terms Data

Segmenting branded queries by intent is the only way to expose where AI Mode is displacing clicks, but in practice, it’s a manual and imperfect process due to match type expansion and query aggregation. Instead of aiming for perfect classification, the goal is to bucket queries using modifier patterns and identify where performance is diverging. This turns a blended brand campaign into distinct intent segments, making hidden inefficiencies visible and actionable.

Start by exporting your branded search terms from Google Ads.

From there, group queries based on the modifiers they contain. Don’t get paralyzed trying to categorize every single long-tail variant. You’re building buckets to stop the bleeding, not a perfect taxonomy.

| Navigational | Informational | Comparative | Transactional |
| --- | --- | --- | --- |
| login | what is | vs | pricing |
| sign in | what does | versus | cost |
| dashboard | how does | alternative | buy |
| portal | how to use | alternatives | demo |
| homepage | features | competitors | free trial |
| website | benefits | compare | trial |
| official site | use cases | comparison | sign up |
| support | examples | better than | subscription |
| help center | guide | similar to | plans |
| contact | tutorial | replacement for | quote |
| phone number | overview | top tools like | discount |
| customer service | explanation | reviews vs | coupon |
| app | review | competitors list | pros and cons |
| get started | download | | |

In practice, this is messy.

Exact match isn’t truly exact anymore. Close variants and query expansion will blur intent. Google Ads Help itself states there is no way to opt out of close variant matching on any keyword type, so this bleed is structural, not a setting you missed. A query like “[brand] pricing vs competitor” might get bucketed unpredictably, and broad match will continuously reintroduce mixed intent into your data.

That’s why most teams end up doing this manually:

- Tagging queries in Sheets for initial analysis

- Using regex or n-gram scripts (e.g., flagging any query containing “how,” “what,” or “vs”) to identify patterns at scale

You’re not classifying perfectly, you’re identifying where the bleed is happening.
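The modifier bucketing described above can be sketched in a few lines of Python. This is a minimal illustration, not a production classifier: the patterns are a subset of the modifier table, `acme` is a placeholder brand, and the precedence order (comparative wins over everything else) is an assumption you should adjust to your own account.

```python
import re

# Modifier patterns per intent bucket (a subset of the modifier table).
# The first bucket whose pattern matches wins, so a mixed query such as
# "acme pricing vs competitor" lands in "comparative". That precedence
# is an assumption, not a rule from Google Ads.
INTENT_PATTERNS = [
    ("comparative", r"\b(vs|versus|alternatives?|competitors?|compare|comparison|better than|similar to)\b"),
    ("navigational", r"\b(login|sign in|dashboard|portal|support|help center|contact|customer service)\b"),
    ("transactional", r"\b(pricing|cost|buy|demo|free trial|trial|sign up|plans|quote|discount|coupon)\b"),
    ("informational", r"\b(what|how|features|benefits|use cases|examples|guide|tutorial|overview)\b"),
]
COMPILED = [(intent, re.compile(pattern, re.IGNORECASE)) for intent, pattern in INTENT_PATTERNS]


def classify_intent(query: str) -> str:
    """Return the first intent bucket whose modifier pattern appears in the query."""
    for intent, pattern in COMPILED:
        if pattern.search(query):
            return intent
    # Bare brand terms and unknown long-tail variants end up here for manual review.
    return "unclassified"


if __name__ == "__main__":
    for q in ["acme login", "what does acme do", "acme pricing vs competitor", "acme"]:
        print(q, "->", classify_intent(q))
```

Anything that comes back as `unclassified` is worth a quick manual pass in Sheets; the point is to size the buckets, not to label every query.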

Tracking CTR Trends by Segment Over Time

Segment-level CTR trends reveal where AI Mode is reducing engagement, typically showing declines in informational and comparative queries while navigational queries remain stable, often alongside a widening gap between impressions and clicks.

There’s no clean “AI Mode start date,” so the goal is to identify when your data starts diverging.

Pull ~6 months of data and trend CTR monthly by intent segment. You’re looking for an inflection point where:

- Informational CTR starts to drop

- Comparative follows

- Navigational holds steady

That divergence is the signal.
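One way to build that monthly trend from a tagged export is sketched below. The row shape (`month`, `intent`, `impressions`, `clicks`) and the sample numbers are assumptions for illustration; map them to whatever columns your export uses. The one design choice that matters is summing clicks and impressions before dividing, rather than averaging per-query CTRs, so low-volume queries don't skew the segment.

```python
from collections import defaultdict


def ctr_by_month_and_intent(rows):
    """Aggregate clicks and impressions per (month, intent), then compute CTR."""
    totals = defaultdict(lambda: [0, 0])  # (month, intent) -> [impressions, clicks]
    for row in rows:
        key = (row["month"], row["intent"])
        totals[key][0] += row["impressions"]
        totals[key][1] += row["clicks"]
    return {
        key: clicks / impressions
        for key, (impressions, clicks) in totals.items()
        if impressions > 0  # skip segments with no impressions to avoid dividing by zero
    }


# Illustrative rows only, not measured data.
sample_rows = [
    {"month": "month-1", "intent": "informational", "impressions": 600, "clicks": 120},
    {"month": "month-1", "intent": "informational", "impressions": 400, "clicks": 60},
    {"month": "month-6", "intent": "informational", "impressions": 1000, "clicks": 90},
    {"month": "month-1", "intent": "navigational", "impressions": 1000, "clicks": 450},
    {"month": "month-6", "intent": "navigational", "impressions": 1000, "clicks": 420},
]
ctr = ctr_by_month_and_intent(sample_rows)
```

Plot or tabulate the result per intent and look for the month where the informational line separates from the navigational one.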

At the same time, watch the impression–click relationship. If impressions stay flat (or grow) while clicks decline, you’re still entering the same auctions but fewer users need to click. That’s AI Mode (or AI Overviews) absorbing the interaction.

Hypothetical Trend Example (illustrative, not measured)

| Query Segment | CTR (Before) | CTR (After) | Change |
| --- | --- | --- | --- |
| Informational | 18% | 9% | -50% |
| Comparative | 22% | 12% | -45% |
| Transactional | 28% | 22% | -21% |
| Navigational | 45% | 42% | -7% |

In parallel, you’ll typically see impressions hold steady while clicks decline, compressing CTR. A sustained ~15% widening in that impression–click gap is a strong indicator this is structural, not noise.
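The change figures in the hypothetical example are relative changes, not percentage-point differences: 18% to 9% is a 9-point drop but a 50% relative decline. A short sketch of that arithmetic, using the same illustrative numbers (the function name is just a label for this example):

```python
def relative_ctr_change(before: float, after: float) -> float:
    """Relative change in CTR: (after - before) / before."""
    if before <= 0:
        raise ValueError("before must be positive")
    return (after - before) / before


# Same illustrative (not measured) CTRs as the hypothetical trend example.
hypothetical = {
    "Informational": (0.18, 0.09),
    "Comparative": (0.22, 0.12),
    "Transactional": (0.28, 0.22),
    "Navigational": (0.45, 0.42),
}

changes_pct = {
    segment: round(relative_ctr_change(before, after) * 100)
    for segment, (before, after) in hypothetical.items()
}
# changes_pct == {"Informational": -50, "Comparative": -45,
#                 "Transactional": -21, "Navigational": -7}
```

Reporting relative change keeps segments with very different baseline CTRs comparable on one chart.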

Where teams get misled is measurement. Smart bidding can mask CTR declines by maintaining conversion volume, and attribution models under-credit the informational clicks that are disappearing. Performance Max (PMax) still doesn’t let you tie queries to specific assets, asset groups, or bid decisions, even with the 2025 transparency upgrades that carried into 2026: Search Terms Insights surfaced as a default report column, channel performance reporting across Google’s full inventory, expanded Search Partner placement reporting, and campaign-level negative keywords. You can see the queries and the aggregate spend; you cannot segment PMax search terms by intent inside the UI the way you can in a Search campaign, and you cannot apply intent-level bid adjustments. The practical workaround is to export PMax Search Terms Insights, tag them by intent modifier in Sheets alongside your Search data, and use campaign-level negative keywords to starve the informational bucket if you can’t separate campaigns. Without this work, the result is “soft performance” with no clear cause.

The goal isn’t to pinpoint when AI Mode started but to recognize when impressions, clicks, and CTR stop moving together across intent segments.

Reallocating Brand Budget by Intent Segment

The correct response to AI Mode is not to reduce brand spend, but to reallocate it away from informational queries where clicks are disappearing and toward high-intent queries that still convert. This shift turns brand from a blended campaign into a capital allocation strategy across intent segments. The remaining budget should be reinvested into content that shapes how your brand is represented in AI-generated answers.

Once you’ve segmented performance by intent, the inefficiency becomes obvious:

- You’re overpaying for clicks that no longer happen

- And under-investing in queries that still convert

This is a capital allocation problem, not a bidding tweak.

The shift requires three coordinated moves:

- Defend high-intent demand

- Reduce wasted spend

- Reinvest into answer-layer influence

Defending Navigational and Transactional Brand Queries

Navigational and transactional brand queries must be protected as guaranteed demand capture, with the priority shifting from efficiency to absolute visibility. This requires isolating these queries, bidding for absolute top impression share, and preventing lower-intent traffic from distorting budget and signals. The risk is not overspending, it’s losing access to high-intent users who still need to click.

These queries (“[brand] login,” “[brand] pricing,” “[brand] demo”) are where AI Mode has the least impact. The risk here isn’t wasted spend, it’s losing access to demand that still converts.

Isolate high-intent brand queries into a controlled environment, protect their visibility, and prevent lower-intent traffic from re-entering the campaign.

1. Break these queries out structurally

Pull core navigational and transactional terms into their own campaign:

- “[brand] login”

- “[brand] pricing”

- “[brand] demo”

Then enforce that boundary. Add negatives for informational and comparative modifiers so these queries don’t re-enter through match type expansion. The goal is to keep this campaign strictly high-intent so budget and bidding signals stay clean.
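A small helper can turn your informational and comparative modifiers into a paste-ready negative list. This is a sketch under assumptions: the modifier lists are a subset of the earlier table, and the output uses phrase-match negative syntax (terms wrapped in double quotes). Negative keywords generally don't expand to plurals or synonyms the way positive keywords do, so list variants like “alternative” and “alternatives” explicitly.

```python
# Illustrative subsets of the informational and comparative modifier buckets.
INFORMATIONAL_MODIFIERS = ["what is", "what does", "how does", "how to use"]
COMPARATIVE_MODIFIERS = ["vs", "versus", "alternative", "alternatives", "compare", "comparison"]


def as_phrase_negatives(modifiers):
    """Normalize, de-duplicate, and wrap each modifier in quotes (phrase-match negative)."""
    seen = set()
    negatives = []
    for modifier in modifiers:
        term = " ".join(modifier.lower().split())  # collapse case and stray whitespace
        if term and term not in seen:
            seen.add(term)
            negatives.append(f'"{term}"')
    return negatives


negative_list = as_phrase_negatives(INFORMATIONAL_MODIFIERS + COMPARATIVE_MODIFIERS)
print("\n".join(negative_list))
```

Re-run it whenever the search terms audit turns up a new modifier leaking into the defensive campaign.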

2. Bid for absolute top, not just top of page

Monitor:

- Impression Share (Absolute Top)

- Impression share lost to rank

With AI Overviews and AI Mode pushing traditional results down, “top of page” is no longer enough. On mobile, you can be technically “top” and still sit below the fold. If you’re not consistently in the absolute top position, you risk losing visibility entirely.

3. Actively monitor competitor pressure

Use Auction Insights on these exact terms and watch for:

- Rising overlap rate

- Drops in outranking share

As performance weakens elsewhere, competitors often push into brand. Even small shifts here can impact conversion volume.

4. Audit the AI Max for Search layer

AI Max for Search is the mechanism Google is using to expand brand query matching into AI-driven experiences in 2026, and brand inclusions are flowing through it. Google began upgrading brand inclusions into AI Max on May 27, 2025, and in September 2025 auto-migrated Dynamic Search Ads, automatically created assets, and campaign-level broad match settings into AI Max as well; new DSA creation was discontinued at that point. That matters for this campaign in two directions. First, if AI Max is enabled on your defensive brand campaign without a tight brand inclusion list, informational and comparative expansions can quietly creep back into a campaign you thought was strictly navigational/transactional. Second, if AI Max is entirely off, your brand may not be eligible to surface in AI Mode ad placements at all. The fix is to audit whether AI Max is on per campaign, confirm brand inclusion lists are scoped to actual brand and navigational/transactional modifiers, and re-check search terms after any AI Max toggle. Match type expansion is the mechanism that historically undoes strict segmentation, and AI Max is the latest version of it.

This structure often breaks when segmentation isn’t fully enforced. Smart bidding will continue optimizing across mixed intent if campaigns aren’t properly separated, while broad match can quietly reintroduce informational queries and undo the split.

At the same time, internal pressure to reduce “brand spend” can lead to cuts in the exact queries that still drive conversions. The fix is to maintain strict campaign separation, constrain match types, audit search terms regularly, and track absolute top impression share so high-intent coverage doesn’t quietly erode below the fold.

Reducing Waste on Informational Brand Queries

Informational brand queries should be treated as optional spend, with costs reduced or eliminated where clicks are no longer happening. As CTR declines, effective cost per visit rises, turning these queries into a source of hidden inefficiency. The goal is to selectively retain influence while aggressively cutting spend that no longer contributes to traffic or pipeline.

When CTR declines, you’re still entering the same auctions but generating fewer clicks. That means you’re effectively paying more per visit, even if CPC appears stable. Left unchecked, this segment absorbs budget without contributing proportional traffic or pipeline.

1. Separate informational queries into their own controlled segment

Informational queries should not sit in the same campaign as navigational or transactional terms. They require different bid logic, different thresholds, and more aggressive pruning. Separation ensures cost adjustments here don’t impact high-intent coverage.

2. Adjust bids to reflect reduced engagement

Lower bids on clusters where CTR has materially declined. The goal is not to recover volume but to align cost with the reduced likelihood of a click.

3. Identify and act on non-contributing query clusters

Look for clusters with:

- High impressions

- Low CTR

- No downstream engagement

These are typically “what is,” “how does,” or “examples” queries that are now being answered directly in the SERP or AI Mode.

From there, apply a simple decision framework:

- Keep: Queries that still drive product engagement or show assisted conversion value

- Reduce: Queries with some engagement but declining CTR

- Pause: Queries with no measurable impact on traffic or pipeline
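The keep/reduce/pause framework can be expressed as a simple rule set. The sketch below is one possible encoding, not a standard: the `Cluster` fields, the 500-impression floor, and the 10% relative CTR decline threshold are all hypothetical values chosen for illustration, and `conversions` is assumed to include whatever assisted signal you trust.

```python
from dataclasses import dataclass


@dataclass
class Cluster:
    name: str
    impressions: int
    clicks: int
    prior_ctr: float    # CTR for the same cluster in the comparison period
    conversions: float  # direct plus assisted, however you count them


# Hypothetical thresholds; tune them to your own volume and risk tolerance.
MIN_IMPRESSIONS = 500
CTR_DECLINE_TO_REDUCE = 0.10  # relative decline versus prior_ctr


def triage(cluster: Cluster) -> str:
    """Return 'keep', 'reduce', or 'pause' for a query cluster."""
    if cluster.impressions < MIN_IMPRESSIONS:
        return "keep"  # too little data to justify a change
    if cluster.conversions > 0:
        return "keep"  # still shows direct or assisted value
    if cluster.clicks == 0:
        return "pause"  # high impressions, no traffic, no pipeline
    ctr = cluster.clicks / cluster.impressions
    if cluster.prior_ctr > 0:
        decline = (cluster.prior_ctr - ctr) / cluster.prior_ctr
        if decline >= CTR_DECLINE_TO_REDUCE:
            return "reduce"  # some engagement, but CTR is falling
    return "keep"
```

For example, `triage(Cluster("how does", 2000, 120, 0.12, 0))` returns `"reduce"`, because CTR has halved from 12% to 6% with no conversion signal. Treat a `"pause"` result as a candidate for review rather than an automatic action.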

Measurement here is imperfect. Google Analytics 4 (GA4) often under-credits early-stage influence, and customer relationship management (CRM) or offline conversion data can lag. That creates a real risk of cutting queries that still matter.

To manage that risk, evaluate performance over a longer time horizon, factor in assisted signals where possible, and reduce bids before pausing outright so you control cost without prematurely cutting off demand creation.

Investing in Content That Shapes AI Mode Answers

As AI Mode absorbs informational demand, budget should shift from buying clicks to shaping the answers users see. This requires investing in clear, crawlable, and comparison-driven content that AI systems can easily extract and synthesize. The objective is no longer just to rank or convert, but to become the source AI uses to define your brand.

Start by building content that explains, not just converts. Pages like “[brand] vs [competitor],” “[brand] alternatives,” and detailed feature breakdowns should be comprehensive, ungated, and written for clarity.

These aren’t just SEO assets; they’re source material for how your brand gets described in AI-generated answers.

Structure matters as much as substance. Content needs to be easy to extract and interpret, which means using clear question-and-answer formats, well-defined sections, and straightforward language.

FAQ-style pages and direct explanations tend to perform better here than traditional marketing copy because they map cleanly to how AI systems summarize information.

AI Mode frequently pulls from third-party sources, so review platforms (G2 and its acquired properties Capterra, Software Advice, and GetApp, plus vertical-specific review sites where they rank), along with independent comparison articles and reviews, shape how your brand gets represented. Outdated positioning or inconsistent messaging in those sources will get surfaced at scale.

Finally, the technical layer needs to support all of this. Content should be fully crawlable, not gated behind forms, and the structure itself (clear question-shaped H2s and H3s, direct 1–3 sentence answer leads, well-defined sections) should do the work of making content easy to extract. A note on markup: FAQPage schema used to earn rich results for most sites, but since Google’s August 2023 restriction, FAQ rich results only appear for authoritative government and health sites. FAQ schema may still help LLMs parse Q&A content, but don’t count on it for SERP real estate.

A note on llms.txt, since it keeps coming up: it was proposed as a way for site owners to signal AI-friendly content to LLM crawlers, but as of 2026 none of the major AI systems (Google, OpenAI, Anthropic, Perplexity) have confirmed support, and independent log analyses show their crawlers aren’t meaningfully reading it. Treat it as optional housekeeping, not a dependency. What actually moves the needle is clean, crawlable, well-structured content, the same fundamentals that feed standard search.

Building an AI Mode Monitoring Dashboard

AI Mode impact must be monitored through divergence, not aggregates, tracking CTR gaps by intent, visibility loss, and impression-to-click trends. A simple weekly dashboard can surface these shifts early, before they impact spend or coverage.

You can build this in an afternoon.

Start by exporting branded search terms, tagging them by intent (navigational, informational, comparative, transactional), and tracking performance weekly in a simple Sheet or Looker Studio view. From there, everything comes down to watching how those segments move relative to each other.

The first place to look is CTR by intent. Navigational should stay stable. Informational and comparative will start to drift down. To make that visible, track the gap between them:

→ Efficiency Gap = CTR (Navigational) – CTR (Informational)

If that gap is widening, something is absorbing clicks upstream.
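A minimal sketch of tracking that gap week over week follows. The weekly CTR pairs are illustrative, and the definition of “widening” (the gap rising in each of the last three week-over-week steps) is an assumption; a rolling average or a fixed threshold would work just as well.

```python
def efficiency_gap(nav_ctr: float, info_ctr: float) -> float:
    """Efficiency Gap = CTR (Navigational) - CTR (Informational)."""
    return nav_ctr - info_ctr


def gap_is_widening(gaps, min_steps: int = 3) -> bool:
    """True if the gap increased in each of the last `min_steps` week-over-week steps."""
    if len(gaps) < min_steps + 1:
        return False  # not enough history to call a trend
    recent = gaps[-(min_steps + 1):]
    return all(later > earlier for earlier, later in zip(recent, recent[1:]))


# Illustrative weekly (navigational CTR, informational CTR) pairs, oldest first.
weekly_ctrs = [(0.45, 0.18), (0.45, 0.16), (0.44, 0.13), (0.44, 0.11)]
gaps = [efficiency_gap(nav, info) for nav, info in weekly_ctrs]
widening = gap_is_widening(gaps)
```

Here the gap grows from roughly 27 to 33 points over four weeks even though navigational CTR barely moves, which is the pattern to watch for.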

Next, check whether you’re still visible when it matters. Pull:

- Absolute top impression share

- Search lost impression share (rank)

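Search lost impression share (rank) is the share of eligible impressions you missed because of Ad Rank. If it is rising on informational queries while your bids and quality signals are unchanged, it’s not just bidding; it’s often that AI-generated results are pushing your ads out of the visible area.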

You’re still entering auctions, but users aren’t seeing you.

Then sanity-check navigational coverage using Auction Insights. If outranking share or overlap shifts on “[brand] pricing” or “[brand] login,” that’s immediate risk. This is still where conversions happen.

Finally, validate what users actually see. Once a month, search your top brand queries in AI Mode and note:

- What sources are cited

- How your brand is described

- Which competitors appear

No platform reports this, so you have to check AI Mode manually.

What you’re watching for is simple:

- Informational CTR down, navigational flat

- Impressions steady, clicks declining

- Visibility (Abs. Top %) eroding

As a rule of thumb, a ~10% CTR drop in a segment or a ~15% widening between impressions and clicks is usually not noise, it’s behavior changing.
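Those rule-of-thumb thresholds can be turned into simple weekly flags. The sketch below rests on one loudly stated assumption: “widening between impressions and clicks” is read as the spread between the period-over-period percent change in impressions and the percent change in clicks. Other readings are possible, so treat the function and its field names as illustrative.

```python
def pct_change(previous: float, current: float) -> float:
    """Relative change from previous to current."""
    if previous <= 0:
        raise ValueError("previous must be positive")
    return (current - previous) / previous


def divergence_flags(previous, current, ctr_drop=0.10, spread_threshold=0.15):
    """Flag a segment using the ~10% CTR drop and ~15% impression-click spread rules.

    `previous` and `current` are dicts with 'impressions' and 'clicks' for one
    intent segment. The spread definition is an assumption, not a platform metric.
    """
    prev_ctr = previous["clicks"] / previous["impressions"]
    cur_ctr = current["clicks"] / current["impressions"]
    ctr_change = pct_change(prev_ctr, cur_ctr)
    spread = (
        pct_change(previous["impressions"], current["impressions"])
        - pct_change(previous["clicks"], current["clicks"])
    )
    return {
        "ctr_drop": ctr_change <= -ctr_drop,
        "impression_click_divergence": spread >= spread_threshold,
    }


# Illustrative segment totals, not measured data.
informational = divergence_flags(
    {"impressions": 1000, "clicks": 180}, {"impressions": 1020, "clicks": 150}
)
navigational = divergence_flags(
    {"impressions": 1000, "clicks": 450}, {"impressions": 1000, "clicks": 440}
)
```

With these sample numbers the informational segment trips both flags and the navigational segment trips neither, which matches the divergence described above.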

This won’t reconcile perfectly across Google Ads and GA4, and Performance Max will still obscure query-to-asset mapping even with the 2025–2026 transparency upgrades. That’s fine. The goal isn’t perfect attribution, it’s directional clarity.

Run this weekly, not monthly. If you wait for clean reporting, you’ll catch the shift after the budget has already moved.

This isn’t about building a perfect dashboard; it’s about building a consistent one. The signals won’t be clean, attribution won’t line up, and parts of the system (especially PMax’s query-to-asset blind spot) will remain partially opaque. What matters is tracking the same cuts of data over time so divergence becomes obvious before it becomes expensive.

Teams that treat this as a weekly operating rhythm will see the shift early and adjust. Teams that rely on aggregated reporting or quarterly reviews will only see it after performance has already moved. AI Mode doesn’t create sudden breaks, it creates slow misalignment between spend and where clicks actually still exist.