Manual Google Ads audits are slow, fragmented, and routinely miss the patterns that actually drive wasted spend. Claude changes that by ingesting full account exports in a single session, surfacing cross-dimensional inefficiencies, and generating scripts that turn findings into ongoing monitoring. The result is a shift from periodic, reactive audits to a continuous system that identifies, diagnoses, and prevents performance degradation.

What Makes Claude Different From Other AI Tools for Pay-per-Click (PPC) Audits

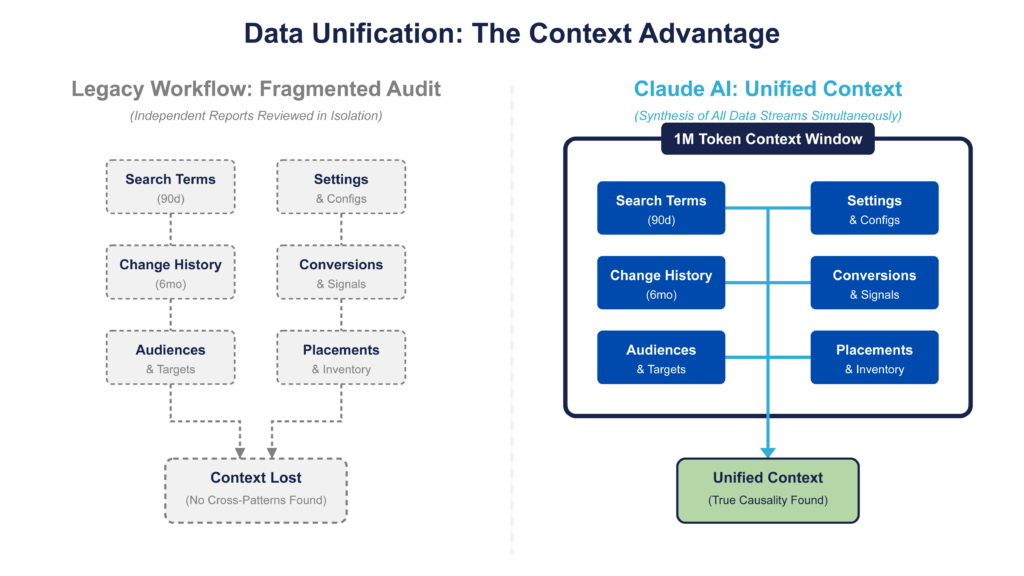

Claude’s advantage is its ability to analyze full, disconnected Google Ads datasets together, eliminating fragmentation and surfacing cross-dimensional patterns that isolated reports miss. Its large context window allows search terms, settings, and change history to be evaluated in a single pass, preserving relationships that break in chunked workflows.

Most audits don’t fail on strategy; they fail on data fragmentation. Once you pull 90 days of search terms, change history, and conversion data, you’re dealing with datasets that are too large and disconnected to analyze cleanly in one pass.

Claude breaks the bottleneck that keeps most audits surface-level.

Claude Opus 4.7 (released April 2026) and Sonnet 4.6 support a 1M token context window, roughly 700K words. (Older models like Sonnet 4.5 still support 200K tokens.) With the 1M window, you can upload:

- Full search term reports (90 days, all campaigns)

- Campaign and account settings

- Six months of change history

- Placement and audience data

…and analyze them together in a single session.

Note: Model specifications current as of April 2026. Check Anthropic’s documentation for the latest.

To put that in practical terms: a 90-day search term report for a mid-size account ($100K–$500K/month spend) typically runs 50K–200K rows. At roughly 10 tokens per row with headers, that’s 500K–2M tokens. Accounts at the lower end of that range fit in a single 1M-token session; those at the upper end, like enterprise accounts with millions of rows, need pre-filtering or segmentation before upload.
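The arithmetic above can be turned into a quick pre-upload check. This is a minimal sketch: the 10-tokens-per-row figure comes from the estimate above, while the function names and the 200K-token reserve for prompts and responses are assumptions chosen for illustration.

```python
def estimate_tokens(rows: int, tokens_per_row: int = 10) -> int:
    """Rough token count for an export, using the per-row estimate from the text."""
    return rows * tokens_per_row


def fits_in_context(
    rows: int,
    context_window: int = 1_000_000,
    reserve_tokens: int = 200_000,  # assumed headroom for prompts and output
    tokens_per_row: int = 10,
) -> bool:
    """True if the export is likely to fit alongside prompts and responses."""
    budget = context_window - reserve_tokens
    return estimate_tokens(rows, tokens_per_row) <= budget


if __name__ == "__main__":
    for rows in (50_000, 100_000, 200_000):
        print(rows, estimate_tokens(rows), fits_in_context(rows))
```

If the check fails, that is the cue to pre-filter (for example, dropping zero-click rows) or to segment by campaign before uploading.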

If you’re running multiple audit passes, Claude’s Projects feature lets you upload your datasets once and reference them across multiple conversations without re-uploading each time. Per-file limit is 30MB. For audit workflows, export as CSV; Claude handles it natively. XLSX requires code execution to be enabled.

Most performance issues in Google Ads are cross-dimensional:

- Cost-per-click (CPC) spikes tied to specific changes

- Conversion rate drops tied to audience expansion

- Waste driven by query patterns interacting with match types

These don’t show up when reports are reviewed in isolation.

Other workflows require splitting datasets and running multiple prompts, which breaks pattern recognition. Claude keeps the full dataset in context, so analysis stays consistent and connected.

Where This Shows Up Practically in Google Ads

Claude’s advantage shows up where Google Ads is hardest to analyze: search terms, change history, and monitoring. It turns fragmented data into patterns, causality, and enforceable controls, shifting workflows from manual inspection to systematic analysis.

1. Full-Scale Search Term Analysis

Claude finds wasted spend in search terms by grouping similar queries together, instead of reviewing them one by one. This makes it easier to spot patterns driving poor performance even in the portion of search data that Google doesn’t fully show.

Typical groupings are word patterns such as “free,” “jobs,” or “DIY.” The real value is how this works under limited query visibility.

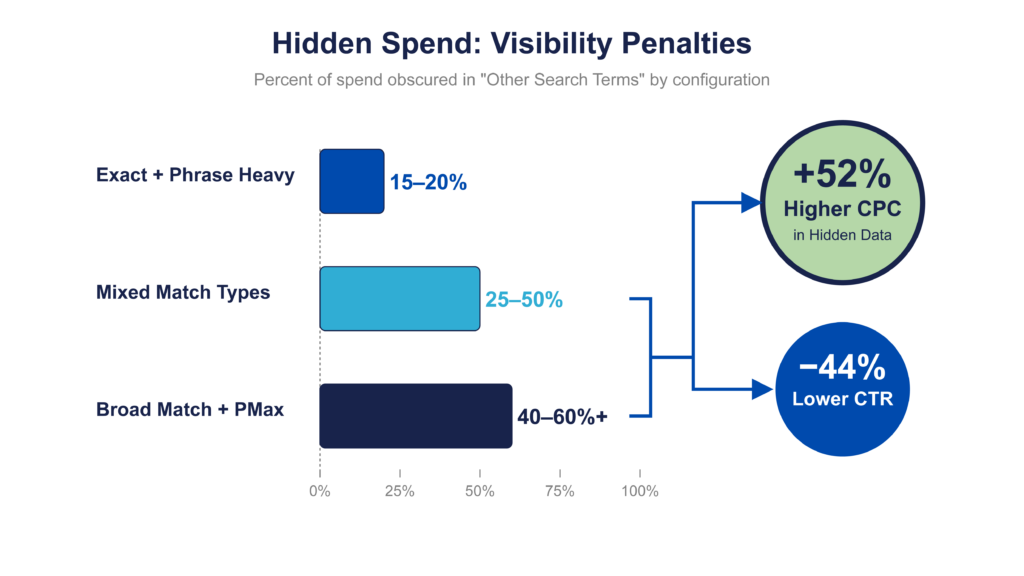

Since 2020, Google has hidden a significant share of queries, routinely pushing 25–50% or more of spend into “Other search terms.” Some accounts see over 70% hidden. Independent analysis of $20M+ in Google Ads spend found that hidden terms drove 52% higher CPCs and 44% lower click-through rates compared to visible queries. The problem is worse in accounts running heavy Broad Match with Performance Max. If you’re auditing an account that’s mostly Exact and Phrase match, you might see 15–20% hidden. All Broad with PMax? Expect 40–60%+. The match type mix determines how much of your data you’re actually analyzing.

Claude doesn’t recover that data. It helps you infer what’s inside it. If visible queries containing “free” drive spend with zero conversions, that pattern likely extends into the hidden portion. Claude quantifies that pattern across the dataset, giving you enough confidence to apply negatives at the pattern level, not just the query level.

This is how you control waste when you can’t see the full query set.

2. Change History Root Cause Analysis

Claude helps you understand why performance changed by connecting results to groups of account updates, instead of reviewing logs line by line. It makes messy, overlapping changes easier to interpret by showing which updates are most likely driving the outcome.

Change history is difficult to interpret because multiple edits (bids, budgets, targeting) often happen within the same window, alongside system-generated changes. Claude groups these into logical clusters and aligns them with performance movement, helping identify which changes likely drove the outcome.

You get a clearer view of causality, even when changes overlap and the log is noisy.

3. Script Generation for Monitoring

Claude turns audit findings into automated checks that run inside Google Ads and alert you when problems come back. Instead of fixing issues once and revisiting them later, scripts monitor continuously so you catch regressions early instead of reacting after performance drops.

Most issues don’t stay fixed; query mix shifts, bidding adapts, and inefficiencies reappear. Scripts turn those one-time findings into repeatable checks that run on a schedule and flag problems early.

This moves you from reactive to proactive, catching regressions before they compound. (Detailed examples and deployment are covered later.)

Preparing Your Google Ads Data for a Claude Audit

Claude’s output is only as good as the data you give it, so clean, complete exports are critical for an accurate audit. The goal is to give Claude full visibility into how the account actually operates so its analysis reflects reality, not gaps in the data.

LLMs are master storytellers; if you feed them fragmented data, they will confidently narrate a fiction about why your cost per acquisition (CPA) is spiking. Garbage in, hallucinated insights out. If inputs are incomplete, misaligned, or missing key dimensions, Claude will still return answers, but they won’t reflect how the account actually behaves.

In practice, that means the same visibility a senior operator would need to diagnose performance issues, just without the time constraint.

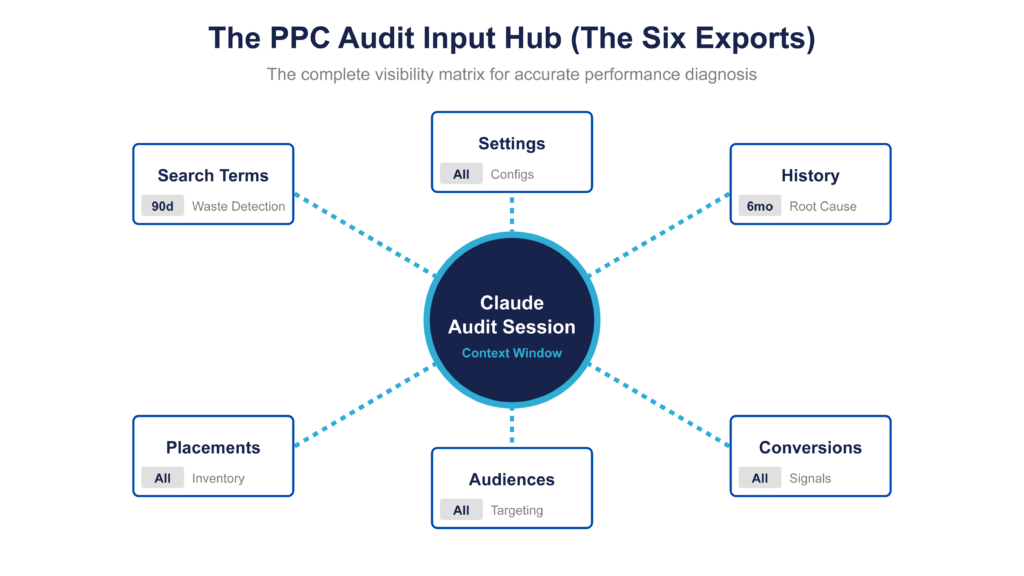

The Six Exports That Cover 90% of Audit Surface Area

A complete Claude audit depends on six core exports: search terms, campaign settings, change history, conversion actions, audience segments, and placements. Together, these show how spend is generated, influenced, and measured. Missing or misconfigured data in any one of these creates blind spots that limit what Claude can accurately diagnose.

Here’s how to pull each one inside Google Ads:

1. Search Terms Report (Last 90 Days — All Campaigns)

Role: Waste detection

This is your primary weapon for identifying “waste creep,” the low-intent queries that Smart Bidding over-optimizes for in the absence of hard negatives.

Pull the report from Campaigns → Keywords → Search Terms with:

- Last 90 days

- All campaigns [Search and Performance Max (PMax) where available]

Include:

- Search term

- Campaign

- Ad group

- Match type

- Cost

- Conversions

- Conversion value

Before exporting, add Segment → Day. This ensures the data includes time granularity, which is required to align query behavior with performance changes. Also add conversion value manually if it’s not in the default column set; the default view doesn’t always include it, and Claude needs it to calculate return on ad spend (ROAS).

Constraint: Google does not show all queries, with a meaningful share of spend hidden in “Other search terms.” This makes the dataset incomplete but still directionally useful. The issue is amplified in Performance Max, where query visibility is limited and not cleanly exportable from the UI. If you’re pulling PMax search terms from the standard report, expect a near-empty or heavily filtered result. The Insights tab inside PMax campaigns provides Search Categories that are more useful for directional analysis. Most teams also rely on scripts or parallel Standard Shopping campaigns to recover usable data; otherwise, Claude is analyzing a filtered subset of actual query behavior.

2. Campaign Settings Export (All Campaigns)

Role: Misconfiguration detection

This export shows how campaigns are configured, which determines how traffic is being acquired and controlled.

Pull the report from Campaigns → Settings using the export function.

Include:

- Networks (Search/Display enabled)

- Bidding strategy

- Budget

- Location targeting

- URL expansion (for PMax)

If the account uses AI Max for Search, check whether auto-generated assets (headlines, descriptions) and URL expansion are enabled. AI Max combines search term matching, text customization, and final URL expansion into a single suite. It can send traffic to landing pages you didn’t explicitly choose and generate ad copy you didn’t write. As of 2026, Google is auto-migrating Dynamic Search Ads and automatically created assets into AI Max, so even accounts that didn’t opt in may have it active. This changes the audit surface area: you’re no longer just reviewing what the advertiser configured, you’re reviewing what AI Max decided to do on their behalf.

Reviewing this data makes it possible to identify mismatches between intended strategy and actual configuration.

Constraint: Some settings like auto-applied recommendations do not appear clearly in exports. These can introduce changes (e.g., keywords, bids, targeting) without being visible here, so they must be validated directly in the Google Ads UI under Recommendations → Auto-apply settings. Additionally, Google Ads experiments now auto-apply winning variants by default as of April 2026. This means experiment results can be pushed live without manual review. Check experiment settings directly in the UI to confirm whether auto-apply is enabled.

3. Change History (Last 6 Months)

Role: Root cause analysis

This report shows what changes were made in the account and is used to explain performance shifts.

Pull the report from Campaigns → Change History with:

- Last 6 months

- All changes (no filters)

This dataset allows you to connect performance movement to specific account actions.

Be aware that change history exports can be enormous. System-generated entries (automated bidding adjustments, auto-applied recommendations, AI Max expansions) often outnumber human changes 10:1. If you upload the raw export without filtering, Claude will spend tokens parsing noise. Pre-filter to “Made by: User” if you want to isolate human-initiated changes, or keep the full set if you want Claude to identify which system-generated changes correlated with performance shifts. Either way, tell Claude which filter you applied so it interprets the data correctly.
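The pre-filtering step can be done locally before upload. The sketch below assumes the export has a `User` column and that human edits carry an email address there while system-generated entries do not; both the column name and that heuristic are assumptions to verify against your own export.

```python
import csv
import io


def split_changes(rows, actor_column="User"):
    """Split change-history rows into (human, system) lists.

    Assumption: human edits have an email address in the actor column;
    anything else is treated as system-generated.
    """
    human, system = [], []
    for row in rows:
        actor = (row.get(actor_column) or "").strip()
        (human if "@" in actor else system).append(row)
    return human, system


# Illustrative data only; real exports have more columns.
SAMPLE_EXPORT = """Date,User,Campaign,Change
2026-03-02,ana@example.com,Brand - Search,Budget changed
2026-03-02,Recommendations Auto-Apply,Generic - Search,Keywords added
2026-03-03,Google Ads,Generic - Search,Bid strategy adjusted
"""

if __name__ == "__main__":
    rows = list(csv.DictReader(io.StringIO(SAMPLE_EXPORT)))
    human, system = split_changes(rows)
    print(f"{len(human)} human changes, {len(system)} system changes")
```

Keeping both lists lets you upload either subset, or both as separate files, and state explicitly in the prompt which one Claude is looking at.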

Constraint: Change history is noisy and fragmented. Bulk edits create dense clusters, timestamps don’t always align cleanly, and multiple changes overlap within the same window. The bigger issue is auto-applied recommendations, where Google can quietly introduce changes (e.g., Broad Match expansion, Display expansion) without clear visibility. This turns root cause analysis into a forensic problem. Claude’s role is to identify whether a system-driven “optimization” actually caused the performance shift.

4. Conversion Actions List

Role: Signal integrity audit

This export shows what signals Smart Bidding is actually optimizing toward.

Pull the report from Goals → Conversions and export all conversion actions. (In some Google Ads UI versions, this may appear under Tools → Measurement → Conversions. Verify the path in your account.)

Include:

- Action name

- Primary vs Secondary status

- Attribution model

- Conversion window

- Source (website, import, offline)

Reviewing this list makes it possible to verify whether the account is optimizing toward real business outcomes.

The most common real-world failure here is overlapping tracking setups: a Google Ads conversion tag, a GA4 event import, and Enhanced Conversions all firing on the same purchase event. When this happens, the same conversion gets counted two or three times. Smart Bidding then optimizes toward inflated volume at the expense of actual ROAS, because it sees three “purchases” where one occurred. Claude should be prompted to specifically look for overlapping sources and count methods across conversion actions. If you see multiple actions with the same category (e.g., two “Purchase” actions from different sources both marked Primary), that’s the signal.
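The overlap signal described above is simple enough to check mechanically. This is a rough sketch, assuming each conversion action has been loaded as a dict with `name`, `category`, `source`, and `status` keys; those key names are hypothetical and would need mapping from the actual export headers.

```python
from collections import defaultdict


def find_overlapping_primaries(actions):
    """Return {category: [action names]} where two or more Primary actions
    in the same category come from different sources."""
    by_category = defaultdict(list)
    for action in actions:
        if action["status"].strip().lower() == "primary":
            by_category[action["category"].strip().lower()].append(action)

    overlaps = {}
    for category, group in by_category.items():
        sources = {a["source"].strip().lower() for a in group}
        if len(sources) > 1:  # same event counted via more than one source
            overlaps[category] = [a["name"] for a in group]
    return overlaps


if __name__ == "__main__":
    sample = [
        {"name": "Purchase (Ads tag)", "category": "Purchase",
         "source": "Website", "status": "Primary"},
        {"name": "purchase (GA4)", "category": "Purchase",
         "source": "GA4 import", "status": "Primary"},
        {"name": "Add to cart", "category": "Add to cart",
         "source": "Website", "status": "Secondary"},
    ]
    print(find_overlapping_primaries(sample))
```

A hit here does not prove double counting, since the two actions may use different count settings, but it identifies which actions to inspect first.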

Constraint: Micro-conversions (e.g., page views, add-to-cart) are often set as Primary. When this happens, Smart Bidding prioritizes these high-volume actions over purchases or qualified leads, which distorts performance. Use this export to identify those actions and reclassify them as Secondary or Observe-only, reserving Primary status for revenue-driving conversions.

5. Audience Segment Report (All Campaigns)

Role: Hidden targeting expansion

This report shows where spend is actually being allocated across audience segments.

Pull the report from Audiences and export all segments across campaigns.

Include:

- Impressions

- Cost

- Conversions

This data helps identify which audiences are driving spend and whether they align with the intended targeting strategy.

Constraint: Features like optimized targeting and audience expansion can introduce segments that were never explicitly added, allowing spend to flow into audiences outside the intended strategy. Use this report to identify high-spend, low-performing segments and either exclude them or tighten targeting controls. Note that optimized targeting applies to Display, Demand Gen, and Video action campaigns. It does not apply to Search campaigns.

6. Placement Report (PMax & Display)

Role: Inventory quality control

This report shows where ads are actually being served across placements.

Pull the report from Campaigns → Insights and reports → When and where ads showed, then select the “Where ads showed” tab for Display and Performance Max campaigns.

Include:

- Placement URL/app

- Impressions

- Cost

- Conversions

This data helps identify low-quality inventory and wasted spend across placements.

As of early 2026, Google began showing PMax placement data in this report for the first time. Previously, the report existed but returned no data for PMax campaigns. It now shows placements, networks, and impressions, though cost is reported as a single aggregate line for Search Partner Network, not distributed across individual placements.

Constraint: Placement visibility in PMax is partial. The report provides a filtered view, not full inventory coverage, so you are auditing a subset rather than the complete distribution.

Formatting Tips That Improve Claude’s Analysis

Claude performs best when datasets are clean, aligned, and complete, using standard CSV exports with consistent headers, full cost and conversion columns, and synchronized date ranges across files. Uploading all datasets together enables cross-referencing, which is where most of the audit value is created.

Key practices:

- Keep Google’s default headers: Avoid renaming columns unless necessary. Claude interprets standard field names more reliably.

- Include full performance metrics: Claude needs these columns, at minimum, to calculate derived metrics like CPA and ROAS:

- Cost

- Conversions

- Conversion value

- Align date ranges intentionally: Mismatched windows make causal analysis less reliable.

- Search terms: 90 days

- Change history: 6 months

- Upload all files in one session: This is critical. Claude’s advantage comes from cross-referencing query → conversion → change → setting. Splitting uploads breaks that chain.

- Redact sensitive data: Before uploading to any LLM environment, remove:

- Client names

- Account IDs

- Proprietary naming conventions
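Two of the practices above, checking for required columns and redacting identifiers, lend themselves to a small pre-upload script. The sketch below makes several assumptions: the required header names mirror the column list in this article (a real export may label them differently, e.g., an abbreviated conversion value header), account IDs follow the `123-456-7890` pattern, and client names are supplied by you.

```python
import re

# Assumed header names; adjust to match the actual export.
REQUIRED_COLUMNS = {
    "Search term", "Campaign", "Ad group", "Match type",
    "Cost", "Conversions", "Conversion value",
}

# Assumed account ID format: three digits, three digits, four digits.
ACCOUNT_ID = re.compile(r"\b\d{3}-\d{3}-\d{4}\b")


def missing_columns(header, required=REQUIRED_COLUMNS):
    """Return required column names absent from the export header, sorted."""
    present = {h.strip() for h in header}
    return sorted(required - present)


def redact(text, client_names=()):
    """Mask account IDs and any supplied client names (case-insensitive)."""
    text = ACCOUNT_ID.sub("[ACCOUNT_ID]", text)
    for name in client_names:
        text = re.sub(re.escape(name), "[CLIENT]", text, flags=re.IGNORECASE)
    return text


if __name__ == "__main__":
    print(missing_columns(["Search term", "Campaign", "Cost"]))
    print(redact("Acme | Brand | 123-456-7890", client_names=["acme"]))
```

Running the redaction on campaign and ad group names as well as free-text columns matters, because client names tend to live in naming conventions rather than in dedicated fields.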

Five Audit Prompts That Surface Real Waste

The fastest way to turn Claude into a usable audit engine is through five structured prompts: N-gram waste analysis, settings compliance, conversion hierarchy review, change history root cause analysis, and audience audit. Each prompt forces Claude to produce ranked, structured outputs that expose inefficiencies manual reviews rarely catch, while translating directly into actions inside Google Ads.

This is where the workflow shifts from analysis to execution. The prompts below are designed to be copy-pasteable, assuming you’ve already uploaded the six datasets in the previous step.

Prompt 1 — N-Gram Waste Analysis

What it does: Identifies repeated word patterns across search terms that consume spend without producing conversions.

Prompt (copy/paste):

Analyze the uploaded search terms dataset.

- Break all search terms into unigrams, bi-grams, and tri-grams.

- Aggregate total cost, clicks, conversions, and conversion value for each N-gram.

- Identify N-grams with:

- Total cost > $500

- Zero conversions

- Rank these N-grams by total spend descending.

- For each flagged N-gram, suggest negative keyword additions (phrase or exact match).

Output format:

- Table with columns: N-gram, Total Cost, Clicks, Conversions, Suggested Negative Keyword

- Summary of top 10 wasted patterns and total wasted spend

Expected output: A ranked table of wasted word patterns (e.g., “free,” “jobs,” “DIY”) with clear negative keyword recommendations.

Operational nuance: Broad match expansion often introduces these patterns silently. This prompt surfaces them at scale without relying on manual filtering.
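For readers who want to verify Claude's output or run the same analysis locally, the aggregation behind this prompt can be sketched in a few lines. The row keys (`search_term`, `cost`, `clicks`, `conversions`) are assumed names for an already-parsed export, and the $500 threshold mirrors the prompt.

```python
from collections import defaultdict


def ngrams(term, n):
    """Return the word-level n-grams of a search term, lowercased."""
    words = term.lower().split()
    return [" ".join(words[i:i + n]) for i in range(len(words) - n + 1)]


def wasted_ngrams(rows, sizes=(2, 3), min_cost=500.0):
    """Aggregate metrics per n-gram and return those with cost above
    min_cost and zero conversions, ranked by cost descending."""
    totals = defaultdict(lambda: {"cost": 0.0, "clicks": 0, "conversions": 0.0})
    for row in rows:
        grams = set()  # count each n-gram once per query
        for n in sizes:
            grams.update(ngrams(row["search_term"], n))
        for gram in grams:
            totals[gram]["cost"] += row["cost"]
            totals[gram]["clicks"] += row["clicks"]
            totals[gram]["conversions"] += row["conversions"]
    flagged = [
        (gram, t) for gram, t in totals.items()
        if t["cost"] > min_cost and t["conversions"] == 0
    ]
    return sorted(flagged, key=lambda item: item[1]["cost"], reverse=True)


if __name__ == "__main__":
    sample = [
        {"search_term": "free crm software", "cost": 300.0, "clicks": 90, "conversions": 0},
        {"search_term": "free crm download", "cost": 250.0, "clicks": 70, "conversions": 0},
        {"search_term": "crm software pricing", "cost": 400.0, "clicks": 60, "conversions": 2},
    ]
    for gram, totals in wasted_ngrams(sample):
        print(gram, totals)
```

Passing `sizes=(1, 2, 3)` also captures single-word patterns. Note that no individual query in the sample crosses the threshold on its own; only the aggregated pattern does, which is the point of analyzing at the N-gram level.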

Prompt 2 — Campaign Settings Compliance Check

What it does: Flags configuration issues that create inefficiency or misalignment with account structure.

Prompt (copy/paste):

Analyze the campaign settings dataset.

Identify the following issues:

- Campaigns with Display Network enabled alongside Search

- Brand campaigns using broad match keywords

- Campaigns missing key extensions (sitelinks, callouts, structured snippets)

- Campaigns using default conversion windows

- Budget-limited campaigns with high impression share loss

For each issue:

- List affected campaigns

- Estimate potential impact (low, medium, high) based on spend and conversions

- Provide recommended corrective action

Output format:

- Group findings by issue type

- Include campaign name, issue, estimated impact, recommended fix

- Provide a prioritized action list at the end

Expected output: A prioritized list of misconfigurations tied to specific campaigns, with clear fixes.

Platform friction: Some settings (like auto-applied recommendations and experiment auto-apply) won’t show here. Those require separate validation in the Google Ads UI.

Prompt 3 — Conversion Action Hierarchy Review

What it does: Audits conversion tracking to ensure Smart Bidding is optimizing toward revenue-driving actions, not distorted signals. In many accounts, overlapping tracking setups (Google Ads tag, GA4, offline imports, Enhanced Conversions) create duplicate or inflated signals. When low-intent actions or duplicated purchases are marked as Primary, spend shifts toward cheap, misleading conversions that inflate volume while degrading actual business outcomes.

Prompt (copy/paste):

Analyze the conversion actions dataset.

- Identify duplicate or overlapping conversion actions, especially multiple actions tracking the same event from different sources (e.g., a Google Ads tag purchase and a GA4 import purchase both set to Primary)

- Flag micro-conversions marked as Primary (e.g., page views, add to cart)

- Identify missing offline conversion imports if applicable

- Review attribution models and conversion windows for inconsistencies

For each conversion action:

- Recommend classification: Primary, Secondary, or Observe-only

- Provide reasoning based on business impact

Output format:

- Table with columns: Conversion Action, Source, Current Status, Recommended Status, Reason

- Summary of key risks impacting bidding optimization

Expected output: A restructured conversion hierarchy aligned to actual revenue outcomes.

Prompt 4 — Change History Root Cause Analysis

What it does: Connects performance changes to actual account modifications.

Prompt (copy/paste):

Analyze the change history and performance data together.

- Identify significant performance inflection points (spend, CPC, conversions, CPA)

- Cross-reference these with change history events within a 3–7 day window

- Rank changes by likelihood of causing the performance shift

Output format:

- Timeline of performance metrics with annotated change events

- List of suspected causal changes ranked by confidence (high, medium, low)

- Brief explanation of why each change likely impacted performance

Expected output: A timeline showing “what changed” alongside “what happened,” with ranked causal hypotheses.

Platform limitation: Overlapping changes reduce certainty. Claude can rank likelihood, but not prove causality definitively.
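The windowing step in this prompt can be illustrated with a short sketch. It assumes change events have already been parsed into dicts with a `date` and a `description` (hypothetical field names), and it ranks candidates by proximity to the inflection point only. That is a crude heuristic for shortlisting, not a causal test.

```python
from datetime import date, timedelta


def changes_before(inflection, changes, window_days=7):
    """Return changes made within window_days before (or on) the inflection
    date, ordered from closest to furthest in time."""
    start = inflection - timedelta(days=window_days)
    candidates = [c for c in changes if start <= c["date"] <= inflection]
    return sorted(candidates, key=lambda c: inflection - c["date"])


if __name__ == "__main__":
    change_log = [
        {"date": date(2026, 3, 1), "description": "Budget raised on Generic - Search"},
        {"date": date(2026, 3, 9), "description": "Broad match keywords added"},
        {"date": date(2026, 3, 11), "description": "Target CPA lowered"},
        {"date": date(2026, 3, 14), "description": "New ad copy launched"},
    ]
    cpa_spike = date(2026, 3, 12)
    for change in changes_before(cpa_spike, change_log):
        print(change["date"], change["description"])
```

Changes after the inflection date are excluded because they cannot explain it. Widening or narrowing `window_days` within the 3–7 day range is a judgment call that depends on how quickly the bidding strategy reacts.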

Prompt 5 — Hidden Audience Segment Audit

What it does: Surfaces unintended targeting expansion and underperforming audience segments.

Prompt (copy/paste):

Analyze the audience segment dataset.

- Identify audience segments with:

- High spend and low or zero conversions

- Performance significantly below account average

- Detect signs of unintended targeting expansion (optimized targeting, auto-applied audiences)

- Flag segments that may not align with campaign intent

Output format:

- Table with columns: Audience Segment, Spend, Conversions, CPA, Recommendation

- Categorize recommendations: Remove, Observe, Refine targeting

- Summary of potential wasted spend from audience misalignment

Expected output: A list of audience segments to remove, refine, or monitor.

Operational nuance: Audience expansion in Performance Max and Display can introduce segments without explicit input. Optimized targeting applies to Display, Demand Gen, and Video action campaigns, not Search. This prompt helps surface those hidden layers.
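The two flagging rules in this prompt (high spend with zero conversions, and performance well below the account average) can be sketched as follows. The segment keys, the $200 minimum spend, and the 2× CPA multiple are all illustrative assumptions, and the "account average" here is computed only from the segments passed in.

```python
def flag_segments(segments, min_spend=200.0, cpa_multiple=2.0):
    """Return (segment name, reason) pairs for segments that spent at least
    min_spend and either never converted or have a CPA above
    cpa_multiple times the blended CPA of all segments."""
    total_cost = sum(s["cost"] for s in segments)
    total_conversions = sum(s["conversions"] for s in segments)
    blended_cpa = total_cost / total_conversions if total_conversions else None

    flagged = []
    for s in segments:
        if s["cost"] < min_spend:
            continue  # too little spend to judge
        if s["conversions"] == 0:
            flagged.append((s["name"], "zero conversions"))
        elif blended_cpa is not None and s["cost"] / s["conversions"] > cpa_multiple * blended_cpa:
            flagged.append((s["name"], "CPA above threshold"))
    return flagged


if __name__ == "__main__":
    sample = [
        {"name": "In-market: CRM software", "cost": 1000.0, "conversions": 20},
        {"name": "Optimized targeting", "cost": 600.0, "conversions": 0},
        {"name": "Affinity: Business news", "cost": 900.0, "conversions": 3},
        {"name": "Remarketing: 7 days", "cost": 50.0, "conversions": 0},
    ]
    for name, reason in flag_segments(sample):
        print(name, "->", reason)
```

The minimum-spend floor keeps small segments from being flagged on noise; whether a flagged segment should be removed, observed, or refined is still a judgment call.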

These five prompts standardize what is typically an inconsistent, manual audit process into a repeatable system. More importantly, they force structured outputs that map directly to in-platform actions (negative keywords, settings changes, conversion reclassification, and targeting adjustments), closing the gap between analysis and execution.

From One-Time Audits to Continuous Systems

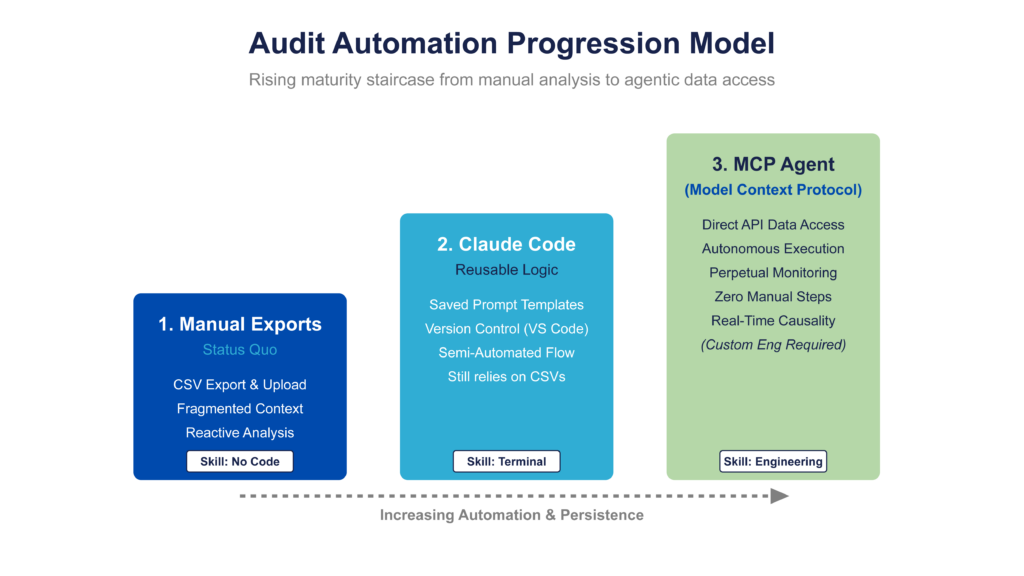

Claude turns one-time audits into repeatable systems by removing the need to rebuild analysis and re-export data each time. With saved workflows (Claude Code) and direct data access (Model Context Protocol), the same audit logic can be reused and run continuously instead of starting from scratch.

The export-based workflow (analyze, diagnose, monitor) is enough to control most accounts. But it’s still manual: every time you want to re-run the audit, you have to export data, upload it, and rebuild the same prompts and scripts.

That repetition is the constraint.

Claude Code removes it by giving you a way to save and version your audit logic as executable code. Claude Code is a terminal-based agentic coding tool from Anthropic, also available in VS Code, JetBrains, desktop, and browser. It reads your project files, writes and edits code, runs commands, and commits changes. For the audit use case, you’d create a project directory with your audit prompt templates as markdown files, Python or JS analysis scripts, and a CLAUDE.md file describing the workflow. Claude Code can then re-run the full audit pipeline on new data exports without rebuilding from scratch. In Q1 2026, Anthropic shipped Auto Mode, which lets Claude Code execute multi-step workflows with less manual intervention, relevant for chaining audit steps end to end.

This requires comfort with a terminal or IDE. It’s not a no-code solution. Think of it as the workflow for teams with engineering support or technically inclined operators.

Model Context Protocol (MCP) addresses the other bottleneck: data access. Instead of manually exporting CSVs from Google Ads, MCP allows Claude to connect to external data sources and pull datasets directly.

However, as of April 2026, there is no official Google Ads MCP server. The MCP ecosystem includes official servers for GitHub, Stripe, Slack, HubSpot, Linear, Sentry, Figma, and others, but Google Ads is not among them. Teams that want direct API access would need to build a custom MCP server wrapping the Google Ads API, a non-trivial engineering project.

A more practical intermediate step is using Claude Code with Python scripts that call the Google Ads API directly (via Google’s google-ads Python library). This lets you automate data pulls without manual CSV exports, while stopping short of a full MCP integration.
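As a rough illustration of that intermediate step, the sketch below builds a Google Ads Query Language query for a 90-day search term pull and streams the results with the `google-ads` library. It assumes the package is installed and that credentials live in a `google-ads.yaml` file; the function names and the selected fields are choices made for this example, not a prescribed setup.

```python
from datetime import date, timedelta


def build_search_term_query(end: date, lookback_days: int = 90) -> str:
    """Build a GAQL query for search terms over an explicit date range."""
    start = end - timedelta(days=lookback_days)
    return (
        "SELECT search_term_view.search_term, campaign.name, ad_group.name, "
        "segments.date, metrics.cost_micros, metrics.conversions, "
        "metrics.conversions_value "
        "FROM search_term_view "
        f"WHERE segments.date BETWEEN '{start.isoformat()}' AND '{end.isoformat()}'"
    )


def fetch_search_terms(customer_id: str, query: str):
    """Yield one dict per result row. Requires the google-ads package and
    valid API credentials, so the import is deferred until this is called."""
    from google.ads.googleads.client import GoogleAdsClient

    client = GoogleAdsClient.load_from_storage()  # reads google-ads.yaml
    service = client.get_service("GoogleAdsService")
    for batch in service.search_stream(customer_id=customer_id, query=query):
        for row in batch.results:
            yield {
                "search_term": row.search_term_view.search_term,
                "campaign": row.campaign.name,
                "date": row.segments.date,
                "cost": row.metrics.cost_micros / 1_000_000,  # micros to currency units
                "conversions": row.metrics.conversions,
                "conversion_value": row.metrics.conversions_value,
            }


if __name__ == "__main__":
    print(build_search_term_query(date(2026, 3, 31)))
```

Writing the yielded rows to CSV gives you the same file you would otherwise export by hand, which keeps the downstream prompts unchanged.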

The analysis doesn’t change. What changes is the workflow:

- Claude Code = reusable audit logic

- MCP = direct data access (when available for your stack)

Together, they remove the manual steps and turn a one-time audit into a repeatable system.

Most teams don’t start here. They begin with exports, validate the workflow, and then layer in integrations once the process is stable.

How to Implement Monitoring Scripts in Google Ads Using Claude

Claude turns audit findings into monitoring scripts that run inside Google Ads and alert you when issues return. This makes it possible to move from one-time fixes to ongoing checks that catch regressions early, using scripts generated from the same logic uncovered in the audit.

The workflow is simple:

Audit → Identify failure pattern → Generate script → Deploy → Monitor continuously

At this stage, the goal isn’t more analysis; it’s persistence. Instead of re-checking the same issues manually, you define a condition once (e.g., brand terms in non-brand campaigns) and let a script monitor for it on a schedule.

Claude handles the translation from insight to execution. Given a clearly defined condition, it generates a Google Ads script that checks recent data and flags violations automatically.

These scripts run inside Google Ads → Tools → Scripts, where they are pasted, tested in Preview mode, and scheduled (daily or weekly). When a condition is triggered, the script sends an email alert with the relevant data, for example, the queries, campaigns, and spend involved.

The constraint is execution environment limits. Google Ads scripts for single accounts have a 30-minute runtime cap (60 minutes for MCC scripts using executeInParallel). Scripts that attempt to analyze large datasets (e.g., 100K+ search term rows for N-gram analysis) will time out.

What makes this worse: scripts that time out fail silently. They just stop. There’s no error message, no alert, no notification that the script didn’t complete. But here’s the critical part: changes made before the timeout are still applied. If your script pauses campaigns or adjusts bids in a loop, a timeout can leave your account in a partially modified state with no record of what completed and what didn’t.

Other constraints to know:

- No npm packages. Everything must be vanilla JavaScript using Google’s built-in services. If Claude generates a script using require() or import, it won’t work. Tell Claude explicitly: “Write for Google Ads Scripts, vanilla JS only, no external libraries.”

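- UrlFetchApp and MailApp quotas. HTTP calls (e.g., posting an alert to a webhook) use UrlFetchApp, which has a daily limit of 20,000 calls for consumer Google accounts (100,000 for Workspace accounts). Email alerts are sent through MailApp, which has its own separate daily sending quota.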

- No built-in failure alerting. If a script fails or times out, you won’t know unless you build monitoring into the script itself. Log to a Google Sheet or send a “heartbeat” email on successful completion so absence of the email signals failure.

To prevent timeouts, scripts must be scoped deliberately using pre-filters (e.g., .withCondition("Clicks > 0")), shorter lookback windows, and defined campaign subsets so the query returns a manageable dataset that can complete within the execution window. Add a row-count check at the top: if the result set exceeds a threshold (e.g., 50K rows), bail early and alert rather than attempting to process and timing out silently.

Script Example — Daily Brand Leakage Monitor

This script checks whether brand queries are triggering in non-brand campaigns and emails flagged violations.

Paste this into Claude:

Write a Google Ads script in JavaScript that checks for brand leakage once per day.

The script should:

- Look at search term data from the last 24 hours

- Identify any queries containing these brand terms: [INSERT BRAND TERMS]

- Check whether those queries appeared in campaigns that are NOT in this approved brand campaign list: [INSERT BRAND CAMPAIGN NAMES]

- If violations are found, send an email to [INSERT EMAIL ADDRESS]

- The email should include campaign name, search query, clicks, cost, and conversions

- If no violations are found, do nothing

Requirements:

- Write the script for Google Ads Scripts, vanilla JS only, no external libraries

- Keep the query scope narrow enough to avoid timeouts

- Add comments explaining each section

- Include any setup steps needed before deployment

Claude should return a script you can paste into Google Ads → Tools → Scripts, test in Preview mode, and schedule daily.
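Before deploying the generated script, it can help to prototype the leakage condition offline against a search term export, mainly to confirm that your brand term and brand campaign lists behave as expected. The sketch below is a Python prototype of the matching logic only, not the Google Ads script itself, and the row keys are assumed names for a parsed export.

```python
def brand_leaks(rows, brand_terms, brand_campaigns):
    """Return rows where a brand term appears in the query but the campaign
    is not on the approved brand campaign list (case-insensitive)."""
    terms = [t.lower() for t in brand_terms]
    approved = {c.lower() for c in brand_campaigns}
    return [
        row for row in rows
        if row["campaign"].lower() not in approved
        and any(term in row["search_term"].lower() for term in terms)
    ]


if __name__ == "__main__":
    sample = [
        {"search_term": "acme crm login", "campaign": "Brand - Search", "cost": 12.0},
        {"search_term": "acme crm pricing", "campaign": "Generic - CRM", "cost": 48.0},
        {"search_term": "best crm for startups", "campaign": "Generic - CRM", "cost": 95.0},
    ]
    for leak in brand_leaks(sample, ["acme"], ["Brand - Search"]):
        print(leak["campaign"], leak["search_term"], leak["cost"])
```

Substring matching is deliberately loose here; short brand terms that appear inside unrelated words will produce false positives, which is worth discovering before the alert emails start.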

Script Example — Weekly N-Gram Cost Alert

This script scans recent search term data, aggregates N-grams, and flags new high-cost patterns with no conversions.

Paste this into Claude:

Write a Google Ads script in JavaScript that runs once per week and identifies wasted N-grams in search term data.

The script should:

- Pull search term data from the last 7 days

- Break queries into bi-grams and tri-grams

- Aggregate cost, clicks, and conversions by N-gram

- Flag any N-gram with more than [INSERT SPEND THRESHOLD] in cost and zero conversions

- Send an email to [INSERT EMAIL ADDRESS] with a summary of flagged N-grams

- Include each N-gram, total cost, clicks, and conversions in the email

Requirements:

- Write the script for Google Ads Scripts, vanilla JS only, no external libraries

- Keep the logic efficient enough to avoid execution timeouts

- Use a limited lookback window and summarized output

- Add comments explaining each section

- Include any setup steps needed before deployment

This is the easiest way to turn the earlier N-gram audit into an ongoing control mechanism.

Script Example — Daily Conversion Action Health Check

This script checks whether key conversion actions are missing volume, failing to import, or behaving abnormally, then emails an alert.

Paste this into Claude:

Write a Google Ads script in JavaScript that monitors conversion action health once per day.

The script should:

- Check conversion data from the last 24 hours

- Monitor these conversion actions: [INSERT CONVERSION ACTION NAMES]

- Flag any action with zero conversions when it normally receives volume

- Flag any major day-over-day drop in conversion volume above [INSERT THRESHOLD]

- Send an email to [INSERT EMAIL ADDRESS] if any issues are found

- The email should include conversion action name, current volume, expected threshold, and type of issue detected

Requirements:

- Write the script for Google Ads Scripts, vanilla JS only, no external libraries

- Keep the script scoped tightly enough to avoid timeouts

- Add comments explaining each section

- Include any setup steps needed before deployment

This is especially useful for accounts that rely on offline imports or CRM-based conversion tracking, where signal loss can quietly degrade bidding performance.

Implementing Claude Google Ads Audits

Claude doesn’t introduce new levers in Google Ads; it makes the existing ones usable at scale. Instead of working through fragmented reports and partial views, it forces your data to connect, surfaces patterns you wouldn’t catch manually, and turns those findings into something you can act on immediately.

The real shift is speed and persistence. Claude compresses analysis into a single pass and then extends it into monitoring so you’re not just finding problems faster, you’re preventing them from returning.